Core AI Visibility Metrics: Definitions and Formulas

AI visibility metrics measure how your brand is represented inside AI-generated responses across platforms like ChatGPT, Perplexity, Google AI Overviews, and Microsoft Copilot. Unlike traditional SEO metrics that track your position in a list of links, these metrics capture presence in synthesized answers where there may be no link at all.

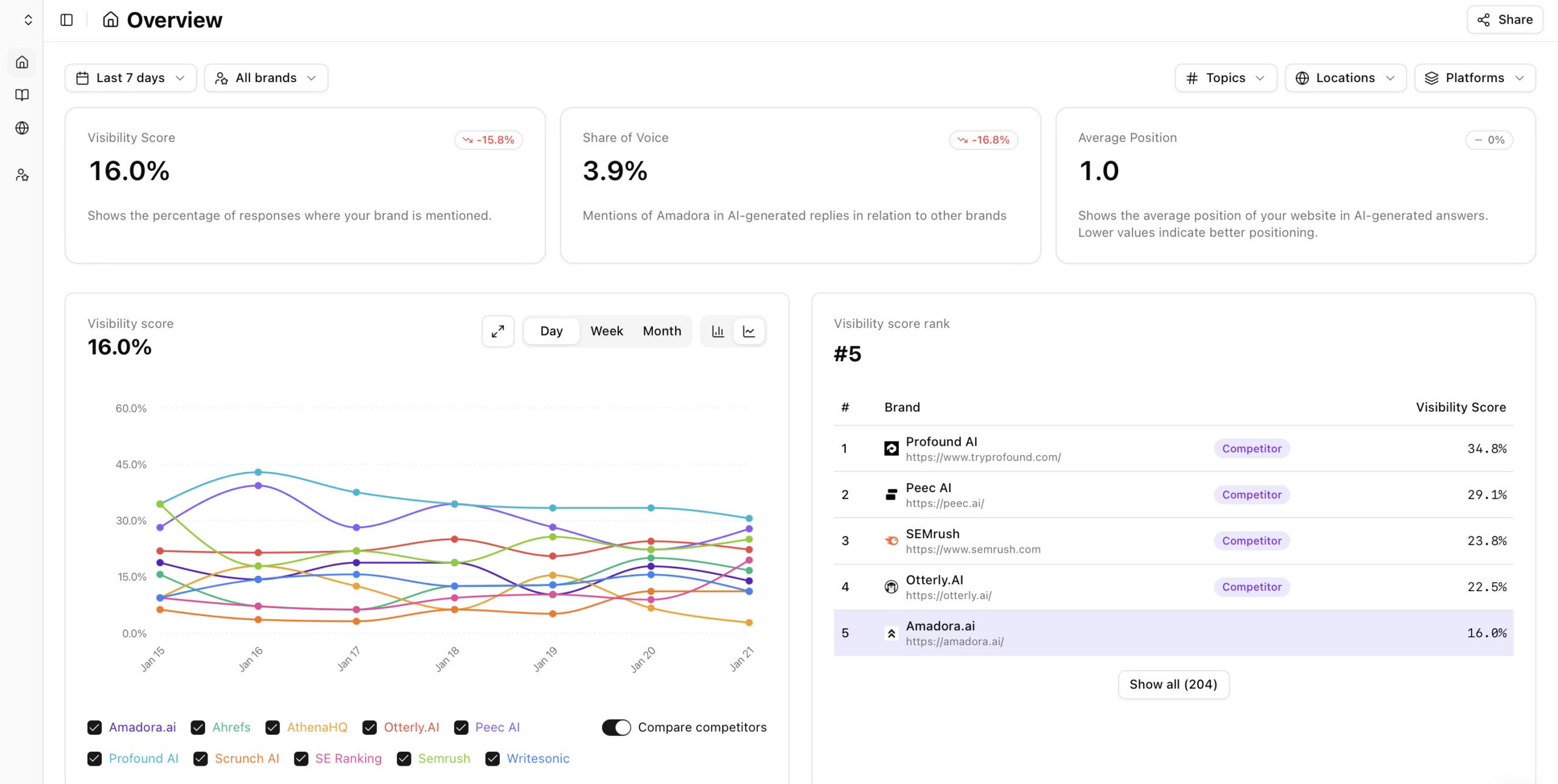

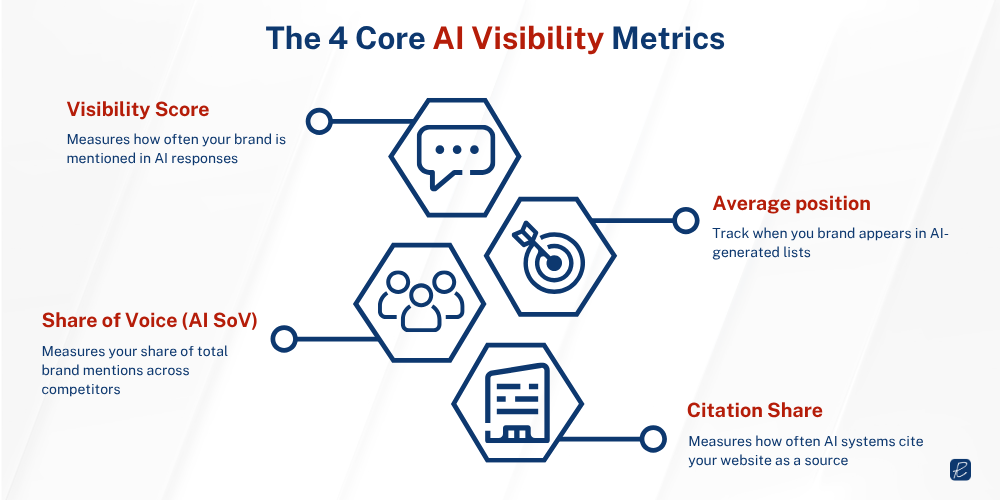

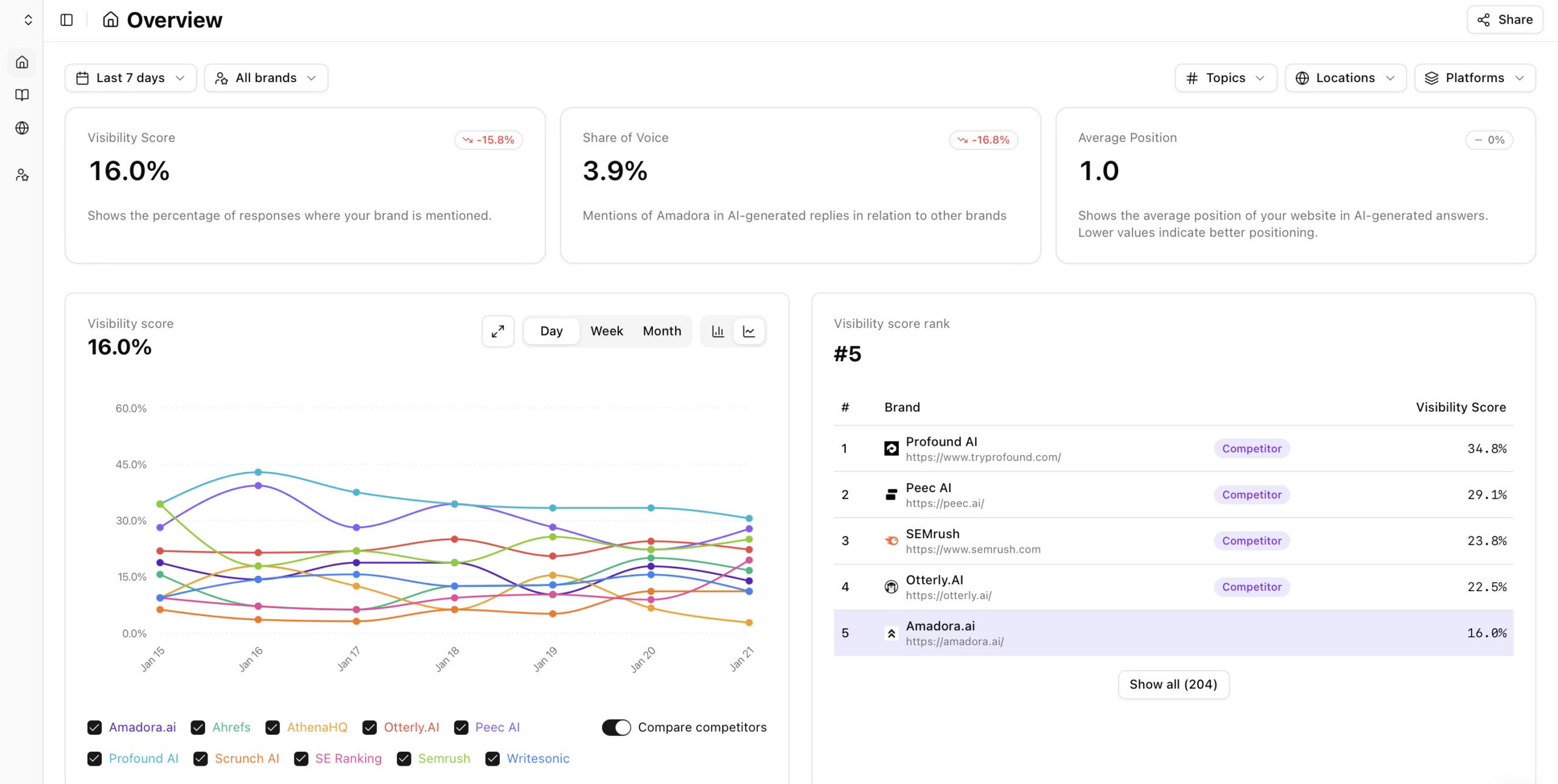

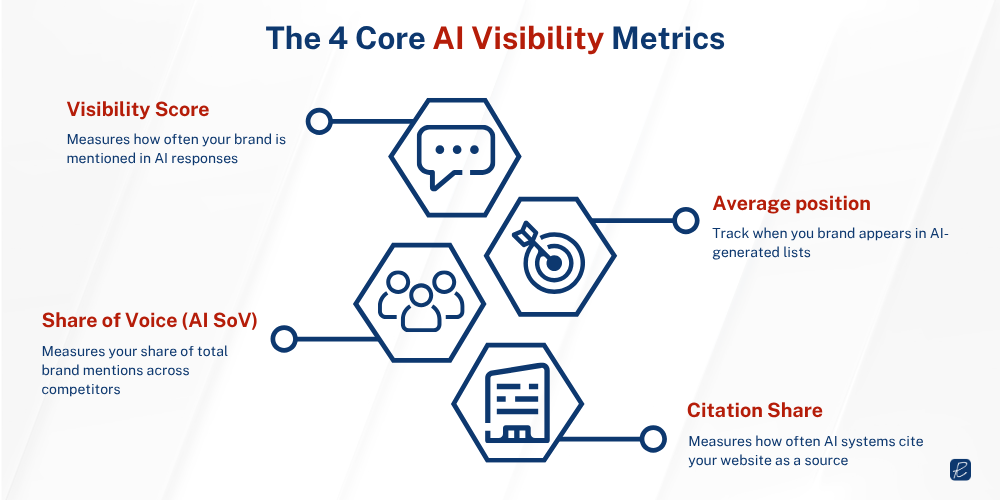

We use Amadora as our primary AI visibility tracking tool. Amadora runs your monitored prompts across AI engines in real time and generates four core scores: Visibility Score, Share of Voice, Average Position, and Citation Share.

This measurement layer is the foundation of how we track AI visibility across the AI Visibility Optimization program.

Each measures a different dimension of your brand’s AI presence.


1. Visibility Score

The Visibility Score is your baseline metric for AI brand presence. It answers a binary question: when a user asks a relevant question, does the AI mention your brand at all? Amadora calculates this by running your monitored prompts through AI engines and counting the responses where your brand name appears.

Formula: (Total Responses Mentioning You ÷ Total AI Responses Generated) × 100

If Amadora runs a prompt 10 times and your brand appears in 4 answers, your Visibility Score is 40%.
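The formula can be sketched in a few lines of Python. This is a minimal illustration, not Amadora's implementation: the function name `visibility_score`, the sample responses, the brand name "Acme", and the case-insensitive substring match are all assumptions made for the example.

```python
def visibility_score(responses: list[str], brand: str) -> float:
    """Percentage of AI responses that mention the brand at least once."""
    if not responses:
        raise ValueError("need at least one response to score")
    # Assumption: a case-insensitive substring match counts as a mention.
    mentions = sum(1 for text in responses if brand.lower() in text.lower())
    return 100 * mentions / len(responses)


# Hypothetical data: one prompt run 10 times, brand present in 4 answers.
responses = ["Acme is a strong option."] * 4 + ["Consider other vendors."] * 6
print(visibility_score(responses, "Acme"))  # 40.0
```

A production tracker would likely need stricter matching (word boundaries, brand aliases) than a plain substring test.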

There is no universal standard for this calculation yet because tools define prompt sets differently and weight AI engines differently. What matters is consistency: the same prompt set, the same platforms, and the same methodology over time so the score functions as a reliable trendline. A single snapshot tells you almost nothing. A score tracked monthly across six months shows whether your content strategy is building AI presence or losing ground.

For B2B brands in niche verticals, a 40% Visibility Score for high-intent commercial prompts such as ‘best [category] software for enterprise security teams’ is more strategically meaningful than an 80% score across generic category terms. Build your prompt set around where buyers actually make decisions, not just where search volume is highest.

Your Visibility Score will also vary significantly by platform. Citations on Google AI Overviews are known to fluctuate frequently, and a brand’s score on ChatGPT and Perplexity can differ by 20 percentage points or more for the same query set. Platform-specific tracking is not optional if you want to understand where you actually stand.
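To make the platform split concrete, here is a rough sketch that computes a separate Visibility Score per platform from the same set of runs. The `(platform, response_text)` tuple layout, the platform labels, and the function name are hypothetical; real tooling may store runs differently.

```python
from collections import defaultdict


def visibility_by_platform(runs: list[tuple[str, str]], brand: str) -> dict[str, float]:
    """Visibility Score per platform from (platform, response_text) pairs."""
    totals: dict[str, int] = defaultdict(int)
    hits: dict[str, int] = defaultdict(int)
    for platform, text in runs:
        totals[platform] += 1
        # Assumption: case-insensitive substring match counts as a mention.
        if brand.lower() in text.lower():
            hits[platform] += 1
    return {p: 100 * hits[p] / totals[p] for p in totals}


# Hypothetical runs of the same prompt set on two platforms.
runs = (
    [("chatgpt", "Acme and Beta are popular.")] * 2
    + [("chatgpt", "Beta leads the category.")] * 2
    + [("perplexity", "Top picks: Acme, Gamma.")] * 3
    + [("perplexity", "Gamma is widely used.")]
)
scores = visibility_by_platform(runs, "Acme")
print(scores)  # {'chatgpt': 50.0, 'perplexity': 75.0}
```

Reporting the per-platform values side by side, rather than a blended average, keeps a gap like the 25-point difference above visible.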

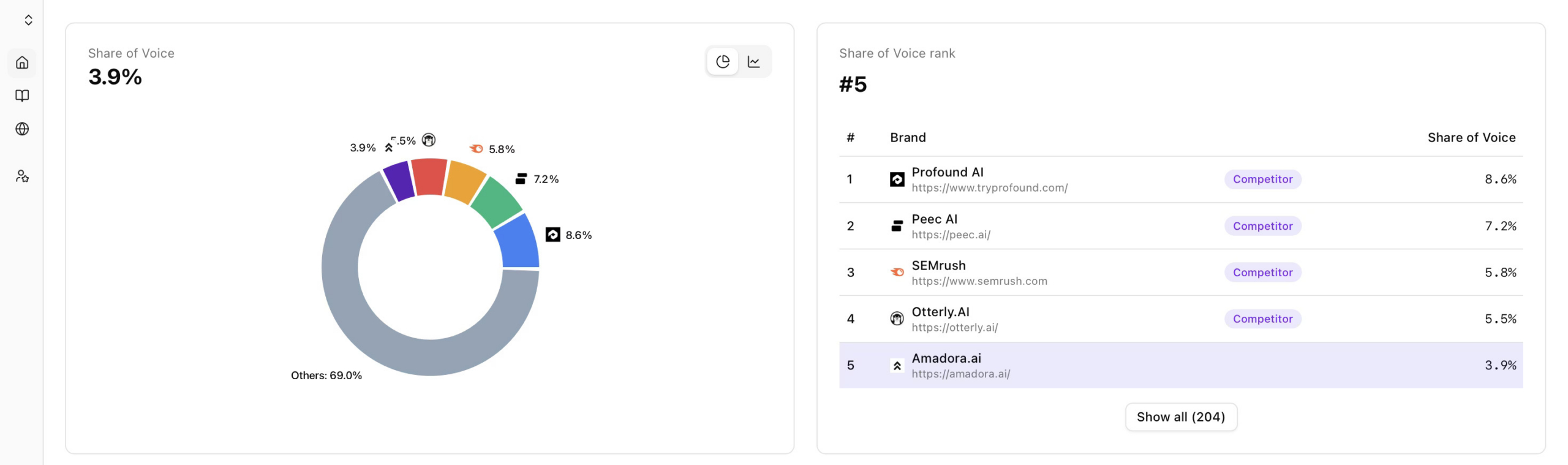

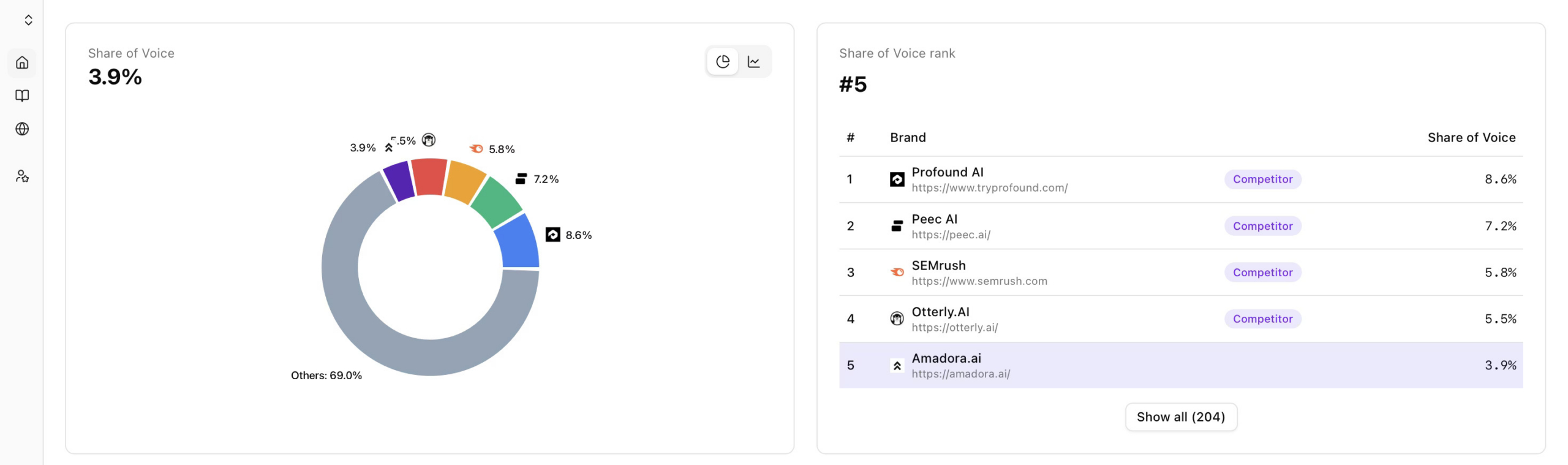

2. Share of Voice (AI SoV)

AI Share of Voice is the most strategically significant metric in this framework because it captures competitive position. AI SoV measures what percentage of total brand mentions across a defined prompt set belong to your brand, compared to all competitors mentioned in those same responses.

Formula: (Your Mentions ÷ Total Mentions of ALL Brands) × 100

Amadora counts every brand mention in the answers, including yours and your competitors’, to build a total mentions pool. If there are 200 total brand mentions in a week and your brand accounts for 100 of them, your AI SoV is 50%, with Competitor A (75 mentions, 37.5%) and Competitor B (25 mentions, 12.5%) splitting the remainder.
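The same arithmetic, written as a short Python sketch. The function name `share_of_voice` and the brand labels are placeholders for illustration, and the mention counts are assumed to have been tallied already.

```python
from collections import Counter


def share_of_voice(mentions: Counter, brand: str) -> float:
    """Brand's share of all brand mentions in the pool, as a percentage."""
    total = sum(mentions.values())
    if total == 0:
        raise ValueError("mention pool is empty")
    return 100 * mentions[brand] / total


# The weekly example from the text: 200 mentions in total.
weekly = Counter({"YourBrand": 100, "Competitor A": 75, "Competitor B": 25})
print(share_of_voice(weekly, "YourBrand"))     # 50.0
print(share_of_voice(weekly, "Competitor A"))  # 37.5
```

Because the denominator is the whole pool, the shares of all tracked brands always sum to 100%.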

There are two variants worth distinguishing:

- Entity-based AI SoV tracks how often your brand appears as a recommended entity in answers to prompts like ‘recommend,’ ‘best,’ or ‘top providers.’

- Citation-based AI SoV tracks how often your content is cited as a source.

Entity-based captures recall, meaning how well AI models associate your brand with your category. Citation-based captures influence, which signifies how much AI trusts your content as a reference point.

For B2B brands, both matter at different stages of the buying process. A CFO asking Perplexity ‘what are the best spend management platforms for mid-market companies’ is an entity-based query. A procurement team asking ‘how should we evaluate spend management vendors’ is a citation-based opportunity. Tracking only one type gives you an incomplete picture.

AI SoV is a C-suite metric. Unlike page rankings, which require translation for non-marketing leadership, AI SoV maps directly to language executives already use: market share. If your category generates 1,000 relevant AI responses per month and your brand appears in 180 while your primary competitor appears in 340, you have a quantified gap and a clear strategic case for investment.

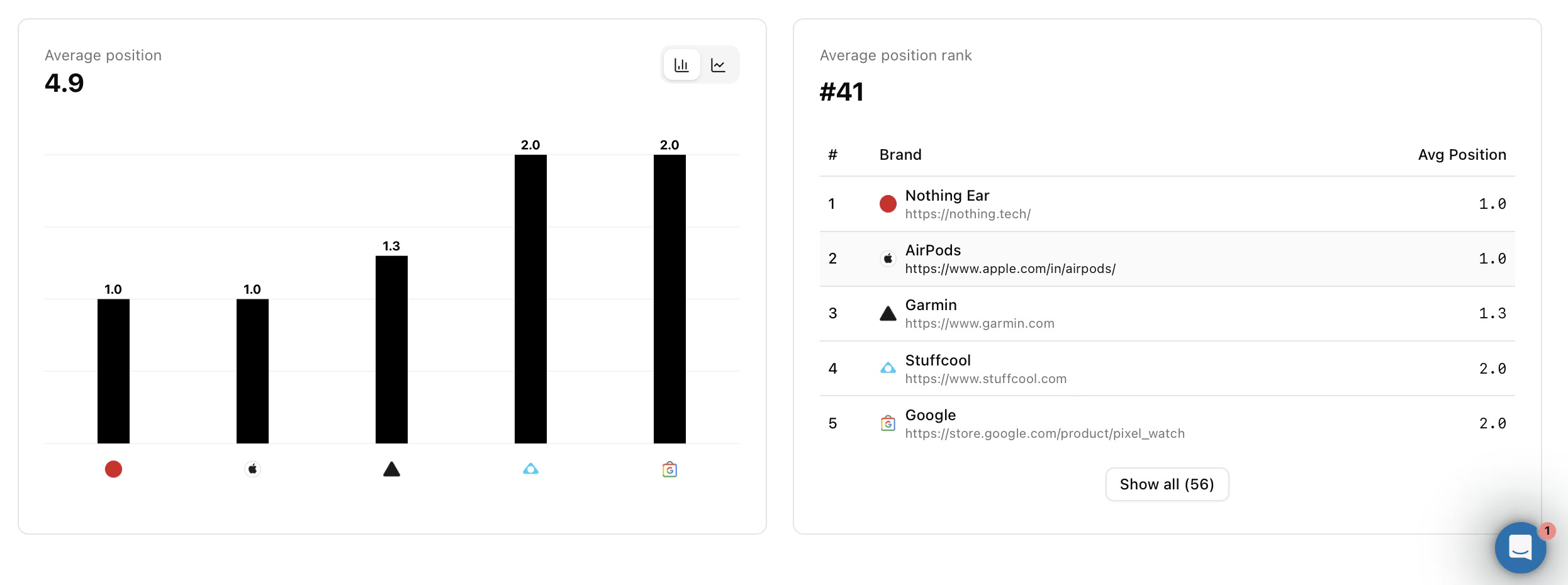

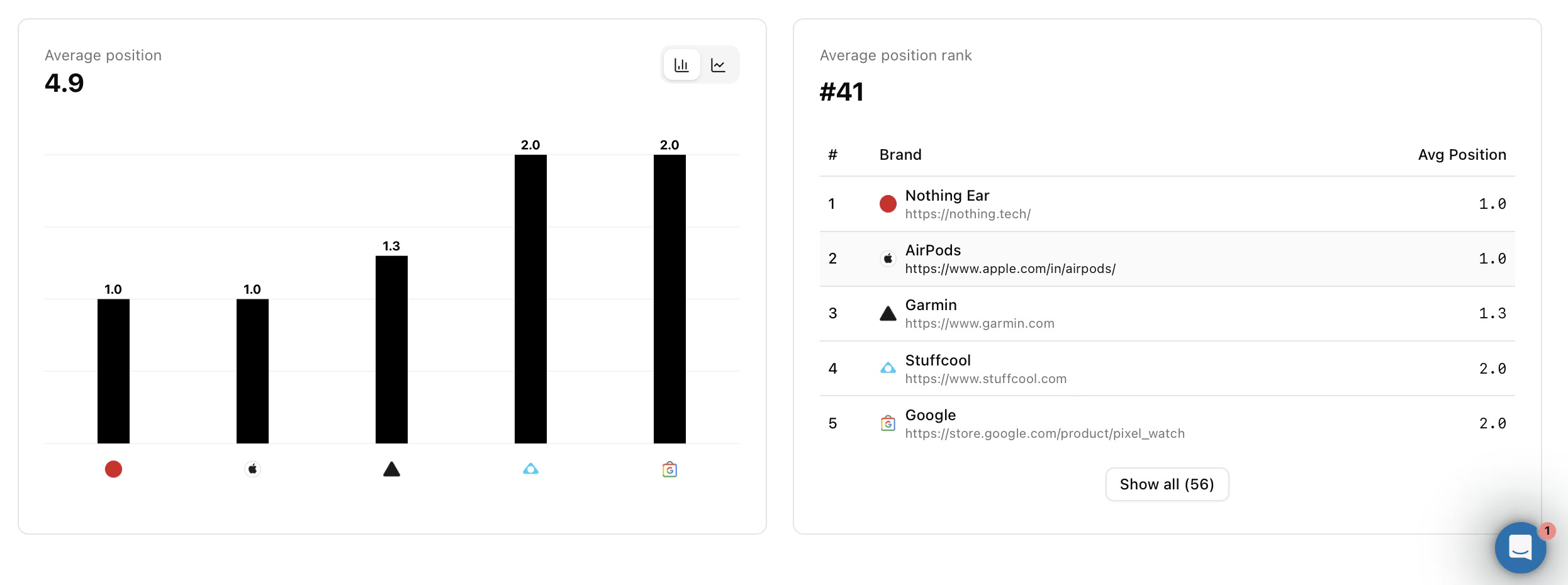

3. Average Position in AI Answers

AI models often present recommendations as a list, for example in response to a prompt like ‘what are the top 5 CRM platforms for mid-market teams’. Average Position tracks where your brand lands on that list. Unlike Visibility Score and Share of Voice, a lower number is better here: Position 1 means you are the first recommendation.

Formula: Sum of Position Rankings Across All Mentions ÷ Total Mentions

This metric is harder to track than pure mention frequency because it requires parsing the structure of AI responses, not just detecting brand presence. Amadora typically assigns numerical position values (first brand mentioned = position 1, second brand mentioned = position 2) and averages them across a prompt set.
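One simple way to approximate this is to rank tracked brands by where each first appears in a response, then average your brand's rank over the responses that mention it. The sketch below makes that assumption explicitly; the function names, the tracked brand list, and the order-of-first-mention heuristic are illustrative and are not a description of how Amadora parses answers.

```python
from typing import Optional


def brand_position(text: str, brand: str, tracked: list[str]) -> Optional[int]:
    """1-based rank of `brand` by order of first mention; None if absent."""
    lowered = text.lower()
    first_seen = {b: lowered.find(b.lower()) for b in tracked}
    # Keep only brands that appear, ordered by first character offset.
    present = sorted((idx, b) for b, idx in first_seen.items() if idx >= 0)
    for rank, (_, name) in enumerate(present, start=1):
        if name == brand:
            return rank
    return None


def average_position(responses: list[str], brand: str, tracked: list[str]) -> Optional[float]:
    """Sum of positions across mentions divided by number of mentions."""
    positions = []
    for text in responses:
        pos = brand_position(text, brand, tracked)
        if pos is not None:
            positions.append(pos)
    return sum(positions) / len(positions) if positions else None


# Hypothetical responses and brand names.
tracked = ["Acme", "Beta", "Gamma"]
answers = ["1. Acme 2. Beta 3. Gamma", "1. Beta 2. Acme", "Gamma is the only pick."]
print(average_position(answers, "Acme", tracked))  # 1.5
```

Responses that do not mention the brand are excluded from the average rather than counted as a bad position, which matches the formula's denominator of total mentions. Substring matching will misfire when one brand name contains another, so real parsing needs to be more careful.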

The reason to track this metric is that AI answers are not neutral lists. When ChatGPT or Perplexity assembles a response recommending vendors, the first brand mentioned tends to receive more weight in the reader’s consideration.

For B2B brands with longer sales cycles, position in AI answers also correlates with shortlisting behavior. A buyer who sees your brand as the first recommendation in three consecutive AI responses is in a different consideration state than one who sees you mentioned seventh. Tracking this over time can reveal whether your content improvements are moving you toward the front of AI recommendations or keeping you in the long tail of mentions.

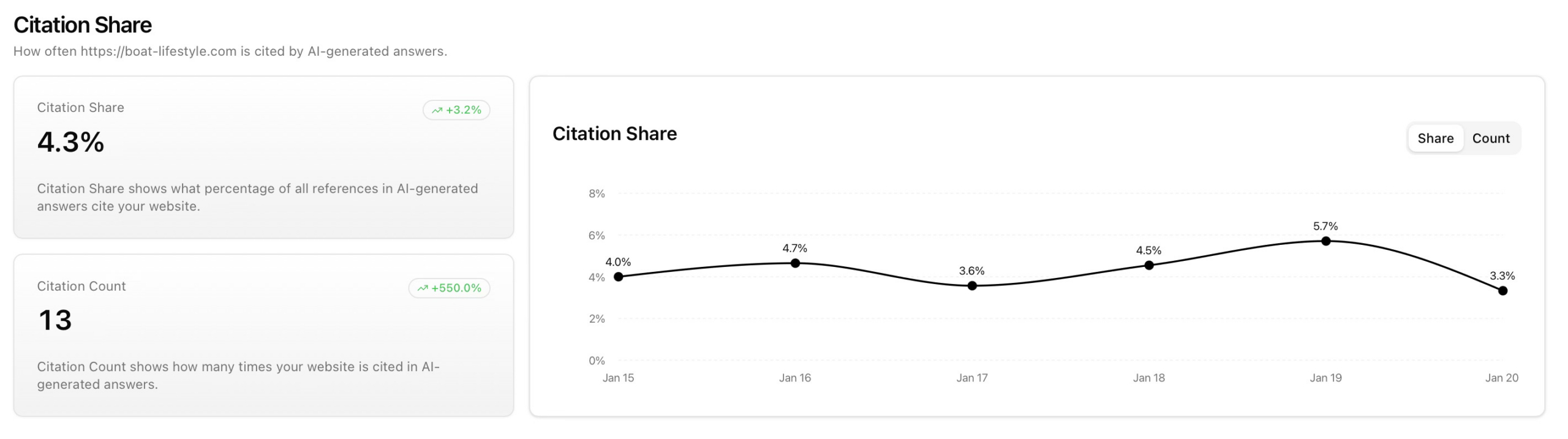

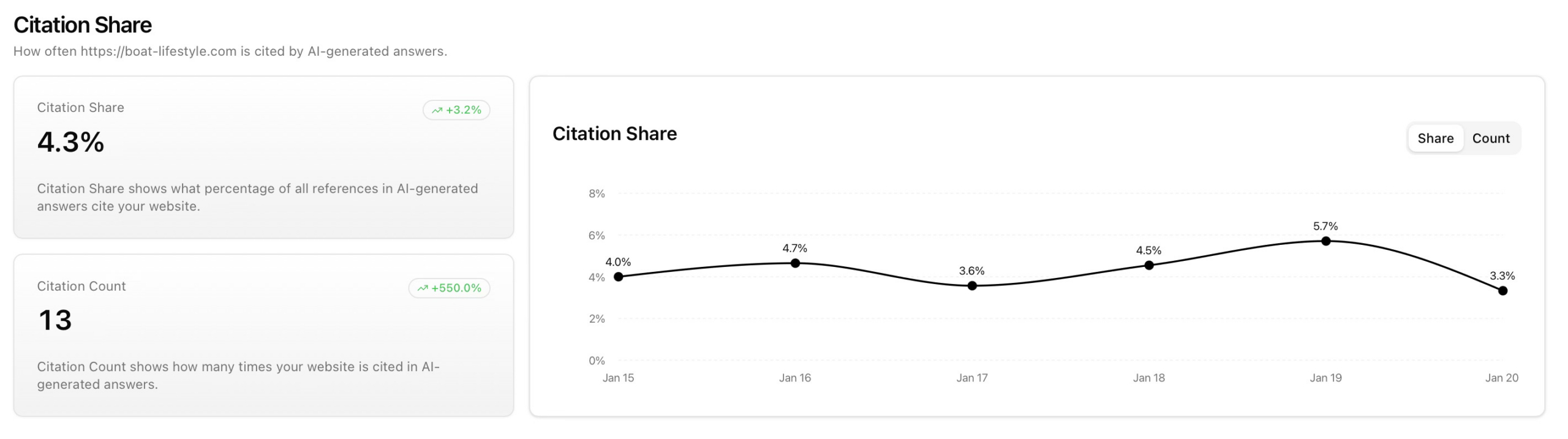

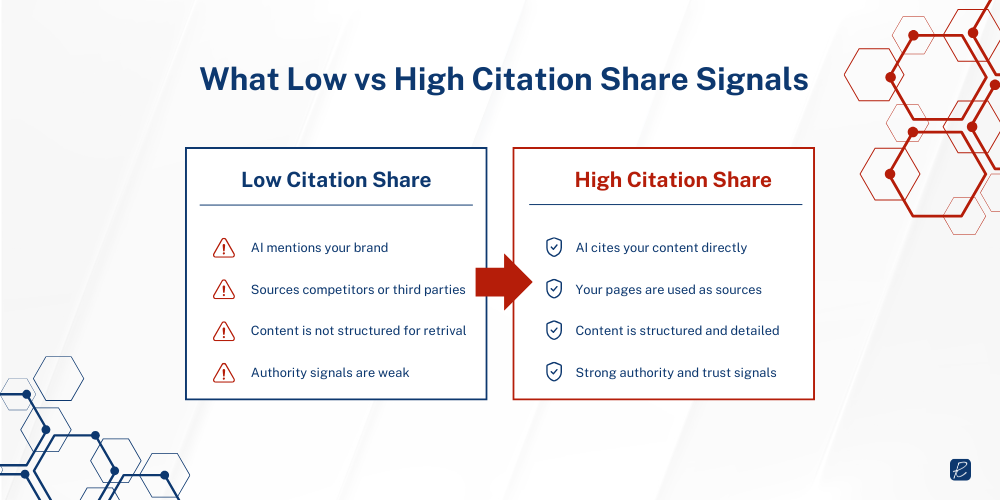

4. Citation Share

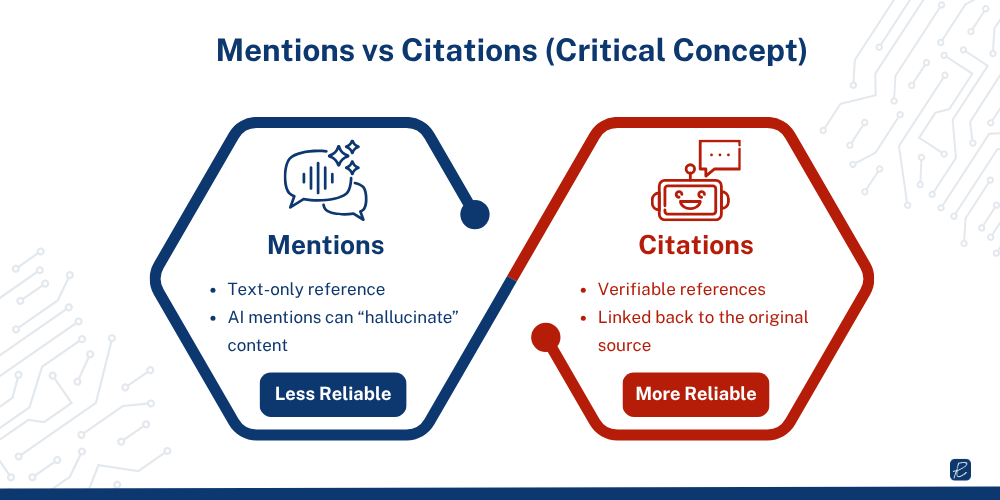

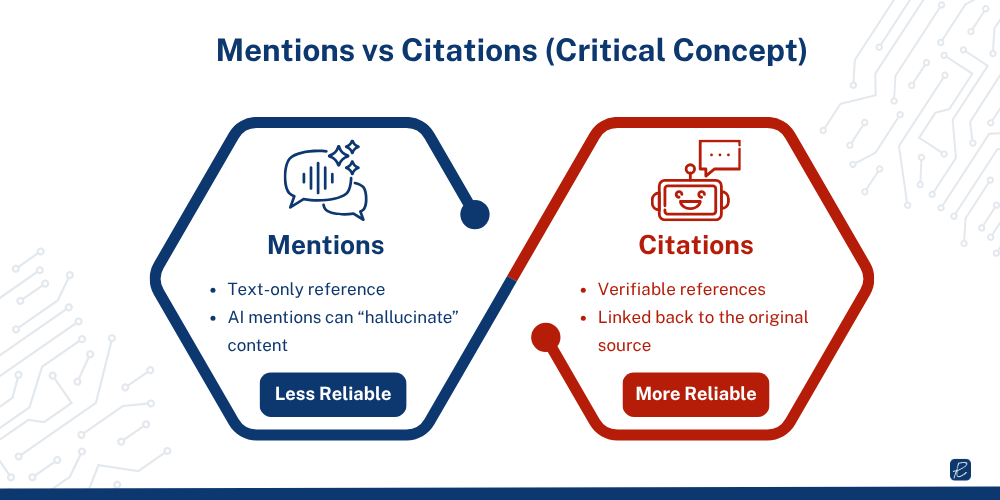

Citation Share is arguably the most actionable metric for driving traffic from AI. It measures how often AI platforms link directly to your website as a source of truth, rather than just mentioning your brand name by association. A mention means the AI knows your brand exists. A citation means the AI trusts your content enough to link to it as the basis for its answer.

Formula: (Your Domain Citations ÷ Total Citations in Generated Answers) × 100

Amadora analyzes the footnotes, ‘Learn More’ sections, and embedded links in every AI answer and calculates what percentage of all citations in those answers point specifically to your domain. The dashboard shows exactly which pages are being cited, and this directly informs your content strategy.
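As an illustration of the calculation, the sketch below takes a flat list of cited URLs already extracted from AI answers and computes the share that points to one domain. The function name, the example URLs, and the rule that subdomains count toward the parent domain are assumptions for this example.

```python
from urllib.parse import urlparse


def citation_share(cited_urls: list[str], domain: str) -> float:
    """Percentage of cited URLs whose host is `domain` or a subdomain of it."""
    if not cited_urls:
        raise ValueError("no citations to score")

    def is_own(url: str) -> bool:
        host = (urlparse(url).hostname or "").lower()
        # Assumption: subdomains such as blog.example.com count as yours.
        return host == domain or host.endswith("." + domain)

    own = sum(1 for url in cited_urls if is_own(url))
    return 100 * own / len(cited_urls)


# Hypothetical citations collected from a batch of AI answers.
cited = [
    "https://www.example.com/guide",
    "https://blog.example.com/vendor-checklist",
    "https://www.g2.com/categories/crm",
    "https://www.reddit.com/r/sales/",
]
print(citation_share(cited, "example.com"))  # 50.0
```

Grouping the matching URLs by path instead of only counting them would give the page-level view the dashboard description refers to.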

The distinction between mentions and citations has direct operational implications. A mention builds awareness. A citation drives clicks from buyers who are already in an evaluation mindset when they arrive at your site – the qualification has already happened inside the AI response.

Brands that earn both citations and mentions resurface more consistently across multiple AI response runs than brands that earn mentions alone.

Platform citation behavior varies substantially. Perplexity typically includes more source citations per response than ChatGPT, which means your Citation Share score will differ by platform. This is why Amadora tracks citation behavior separately across AI engines rather than reporting a single blended number.

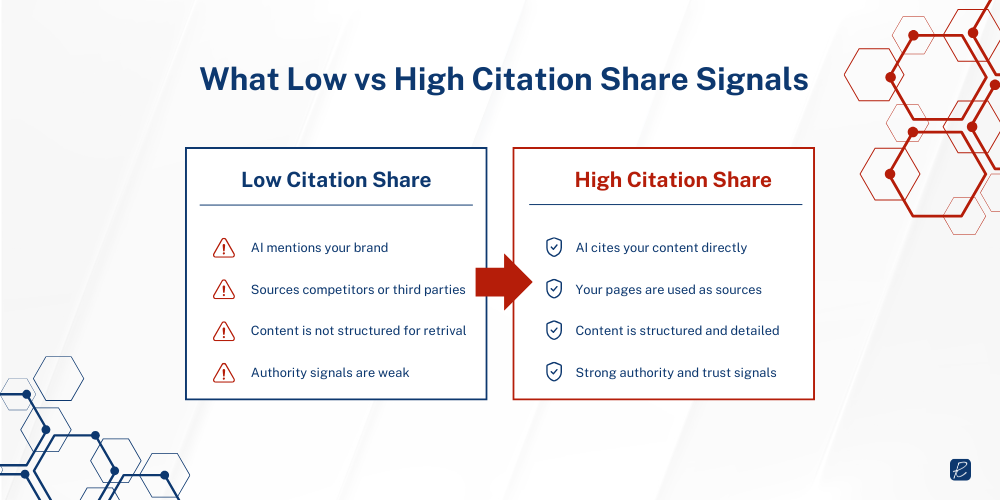

If your Citation Share is low relative to your Visibility Score, the gap implies that the AI knows your brand name but is sourcing its information from third-party reviews or competitors’ content rather than your own site. That pattern signals a content problem: either your pages are not structured in a way that AI can efficiently retrieve, or the depth and authority of your owned content needs to improve.

For B2B marketing teams, Citation Share is one of the strongest leading indicators of pipeline quality from AI search. When a buyer reads an AI Overview that cites your white paper on vendor evaluation criteria, your brand enters the consideration set with credibility attached. That is a different type of awareness than a brand mention with no source attached. Improving Citation Share directly increases the likelihood of qualified AI-driven traffic.

Traditional SEO Metrics vs. AI Visibility Metrics

The most important structural difference is what each framework measures. Traditional SEO measures your position in a ranked list that a human chooses to click or not click. AI visibility measures your presence in a synthesized answer that a human reads and acts on without necessarily clicking anything.

A second major distinction is the unit of measurement. Traditional SEO measures performance at the keyword level. AI visibility measures performance at the prompt level, and prompts in B2B contexts are conversational, variable, and intent-driven in ways that keyword-level tracking was never designed to capture. ‘What are the best HRIS platforms for companies in regulated industries?’ is not a keyword. It is a decision-stage query that triggers AI answers, and your brand either appears in those answers or it does not.

Backlink count and domain authority have partial relevance in AI search but are not directly transferable as trust signals. AI engines evaluate trust through citation signals, entity consistency, third-party mentions in credible sources, and content depth. A brand with 50,000 backlinks and shallow category pages can be outperformed in AI answers by a brand with a fraction of the link profile but well-structured, deeply researched content the AI can actually retrieve and use.

The table below maps the core traditional SEO metrics to their AI visibility equivalents:

| Traditional SEO Metric | Equivalent AI Visibility Metric |
| --- | --- |
| Keyword Rankings | AI Visibility Score / Average Position in AI Answers |
| Organic Click-Through Rate (CTR) | Citation Frequency (clicks are often zero even when cited) |
| Organic Traffic / Sessions | AI Referral Traffic + Brand Mention Rate |
| Domain Authority / Backlink Count | Citation Authority (quality of sources citing your content in AI answers) |
| SERP Feature Visibility (featured snippets) | AI Share of Voice across prompt set |
| Impression Count (Search Console) | Prompt Coverage Rate (how many relevant queries trigger your brand) |
| Page-Level Rankings | Prompt-Level Brand Presence |
| Social Listening Sentiment | AI-Generated Sentiment (how AI frames your brand in answers) |

Traditional SEO and AI visibility metrics are not in competition with each other. Traditional SEO measures performance in channels it was designed for, which no longer account for the full picture of how B2B buyers discover vendors. Running both measurement systems simultaneously is not convenient, but it is necessary.

Strategic Implications for B2B Marketing

Organic rankings still drive direct traffic for branded and commercial-intent queries. AI answers increasingly shape how buyers frame their initial problem, define category criteria, and build shortlists. Abandoning either measurement layer leaves you blind to a significant portion of what drives pipeline. The strategic implications break down into four areas:

1. Reporting must evolve beyond traffic

Traditional dashboards showing sessions, CTR, and rankings are measuring the portion of buyer research that happens after they have already formed initial opinions from AI. If your board is reviewing marketing performance through these metrics alone, they are looking at a lagging indicator of brand discovery. AI Share of Voice, citation frequency, and AI sentiment need to become part of the conversation at the leadership level. This is where most B2B organizations identify gaps between reported performance and actual buyer discovery behavior.

2. Content strategy must serve AI retrieval, not just keyword density

AI engines retrieve content through a process called Retrieval-Augmented Generation (RAG), which prioritizes relevance, recency, and trust. Content that answers specific questions directly, uses clear structure, demonstrates genuine subject matter expertise, and earns citations from reputable third-party sources performs well in AI retrieval. Generic pillar content written for keyword clusters does not. For B2B brands, this means original research, detailed use-case guides, and comparison frameworks are higher priority than they have traditionally been. Structured, well-defined content consistently outperforms keyword-driven content in AI retrieval environments.

3. Third-party presence is now a first-party problem

AI models form their understanding of your brand from the full ecosystem of content that references you: G2 and Capterra reviews, industry publication mentions, Reddit discussions, LinkedIn posts, and news coverage. A brand with excellent owned content but thin third-party presence will underperform in AI visibility relative to its content quality. This makes PR, community building, and review generation strategic inputs to AI visibility rather than peripheral activities.

4. Attribution requires a new model

Traditional last-touch and even multi-touch attribution models were not built to capture the influence of an AI Overview that a buyer read three weeks before requesting a demo. Most B2B buyers use AI to research, gather, or summarize information before engaging with vendors directly.

This means AI-assisted pipeline often looks like direct traffic, branded search, or unexplained high-intent inbound when viewed through a traditional attribution lens. Adding a ‘How did you first hear about us?’ field with AI platform options on your lead capture forms, and tracking brand search volume trends alongside AI visibility metrics, provides a more honest picture.

One data point that B2B CMOs should internalize when making the case for AI visibility investment: 90% of B2B buyers click through to cited sources in AI Overviews to validate the content before acting, according to TrustRadius research. This means citation in AI answers is not only an awareness play. It drives qualified traffic from buyers who are already in an evaluation mindset when they arrive at your site. The qualification happens before the click.
