1. Amadora – Best for SEO Agencies Managing Multiple Clients

Pricing: Starter $59/mo · Professional $179/mo · Agency $499/mo (monthly) | $49/$149/$399 (annual)

Free trial: 7 days, 150 credits, no credit card required

Engines tracked: ChatGPT, Perplexity, Gemini (all plans) + Google AI Overviews, Microsoft Copilot, Grok (add-ons from $9/mo)

Best for: Agencies running GEO as a billable service and enterprise users

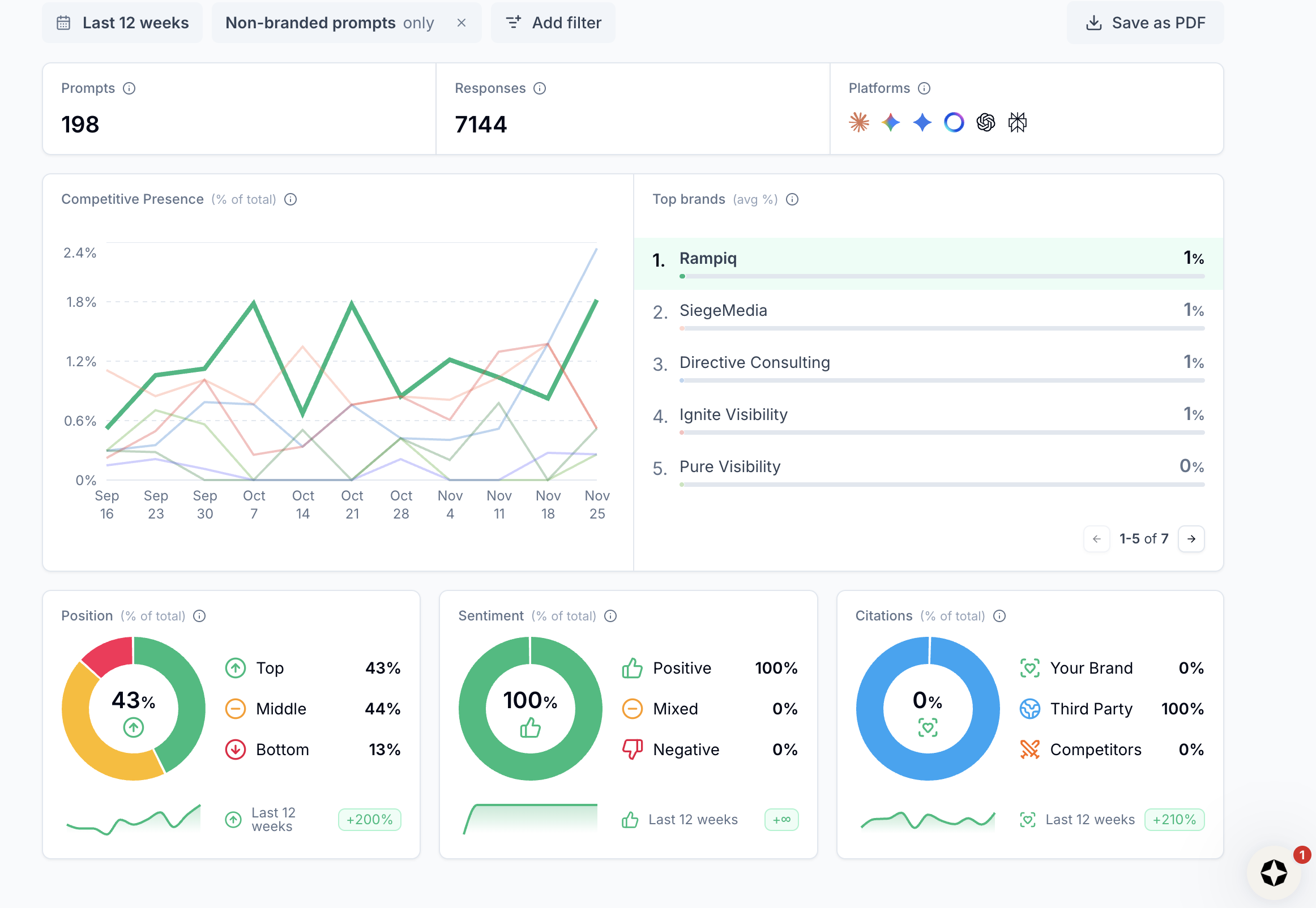

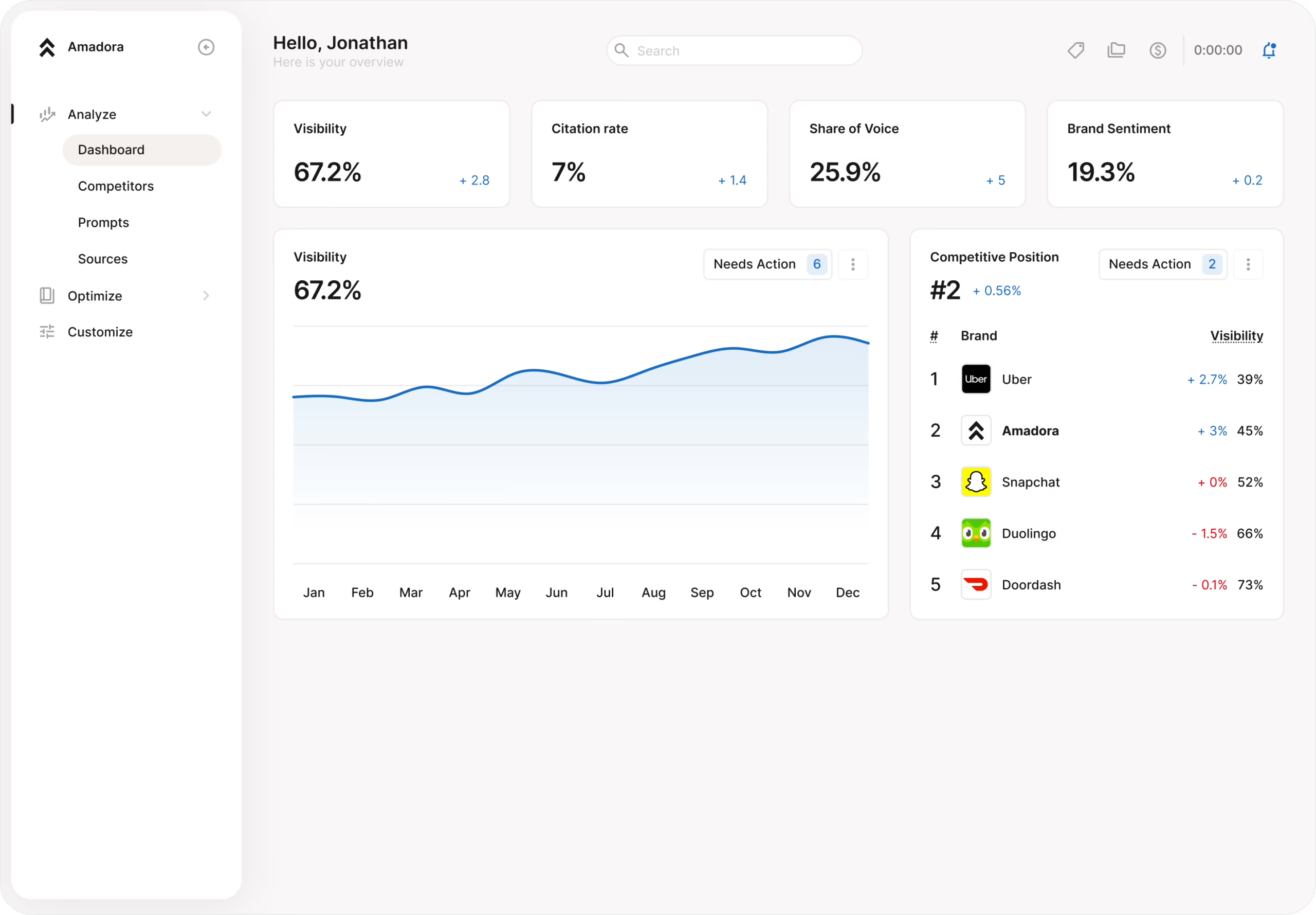

We use Amadora as our primary AI visibility infrastructure at Rampiq, so we’ll be transparent about that upfront. We use it because it solves the exact problems that make AI visibility work difficult to productize as an agency.

What Amadora actually does well

Amadora appears to have been built agency-first, which sounds like marketing language until you see what it means in practice. You can manage unlimited client brands under one subscription, allocate prompts per client, invite team members, and export white-label reports that go straight into client decks. That workflow doesn’t exist at this level in most competing tools, which feel like they were designed for a single brand and awkwardly retrofitted for agencies.

The data collection methodology is one of the things that sold us. Amadora scrapes real AI engine interfaces rather than hitting the API, and each prompt run is isolated, meaning previous conversation history and memory can’t skew results. When you’re reporting to a client that their brand appeared in X% of relevant ChatGPT responses, you need that number to reflect what a real user would actually see. That isolation gives you the confidence to stand behind the data in a client meeting.

The prompt suggestion feature is underrated. Instead of starting from a blank slate trying to guess what your clients’ customers are asking AI assistants, Amadora surfaces likely prompts based on the brand and category. For a new client onboarding, this cuts setup time significantly.

The citation gap analysis is where the tool earns its keep for GEO strategy. You can see not just where your client is being cited, but which URLs the AI engines are pulling from, where competitors appear that your client doesn’t, and which geographic markets are underperforming. That’s the data you need to build a content, PR, and link-building brief that’s actually connected to AI visibility outcomes.
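To make the idea of a citation gap concrete, here is a minimal sketch of the underlying comparison: sources that cite competitors but not the client. It is not Amadora's implementation; the function name `citation_gaps`, the brand names, and the domains are all hypothetical.

```python
def citation_gaps(client_sources, competitor_sources):
    """Map each source that cites a competitor but not the client
    to the list of competitors it cites, most-shared sources first."""
    gaps = {}
    for competitor, sources in competitor_sources.items():
        # Set difference: sources citing this competitor but not the client
        for source in sources - client_sources:
            gaps.setdefault(source, []).append(competitor)
    # Sort by number of competitors cited (descending), then by name
    return dict(sorted(gaps.items(), key=lambda item: (-len(item[1]), item[0])))


# Hypothetical data: domains AI engines cited for each brand
client = {"review-site.example", "industry-blog.example"}
competitors = {
    "Competitor A": {"review-site.example", "trade-pub.example"},
    "Competitor B": {"trade-pub.example", "forum.example"},
}

gaps = citation_gaps(client, competitors)
for source, cited in gaps.items():
    print(f"{source}: cites {', '.join(cited)} but not the client")
```

Sources that cite several competitors at once land at the top of the list, which is usually where an outreach brief would start.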

The Optimization Plan feature (powered by credits) generates a prioritized action plan from the audit data, including specific recommendations like “get listed on these publications” or “optimize this FAQ section for Perplexity.” Unlike some GEO trackers we tested which provide a generic checklist, Amadora’s insights are scoped to the client’s actual gaps. This is what turns a monitoring tool into a service you can bill for.

What we found in testing

Across our eight client accounts, Amadora’s prompt-level data was the most consistently corroborated by manual spot-checks. When we ran the same prompts manually in ChatGPT and Perplexity and compared them to Amadora’s reporting, the match rate was high enough for us to trust the data in client reports. The citation source data (surfacing the actual URLs that AI engines were drawing from) was the feature we referenced most often when building GEO action plans.

Multi-geo functionality also held up in practice. For clients with European and North American audiences, being able to segment prompt performance by country without additional cost was a meaningful operational advantage.

Where it falls short

The Starter plan’s 15 prompts run out fast once you’re doing real monitoring. You’ll want the Professional plan at a minimum, which is $179/mo. That standalone cost is higher than SE Ranking, which bundles AI visibility into plans from ~$119/mo. What you get in exchange is depth and agency workflow functionality.

Our verdict

If you run GEO and AI visibility as a client service, or want to, Amadora is the most coherent platform we’ve found for that specific workflow. The combination of multi-client management, real-interface data collection, citation gap analysis, and client-ready deliverables isn’t replicated at this price point elsewhere.

2. Google Analytics 4 (GA4) – The Essential Traffic and Conversion Layer

Pricing: Free

Engines tracked: Measures referral traffic from any AI engine that sends trackable sessions

Best for: Every team – this is the non-negotiable foundation before any paid tool is introduced

GA4 belongs in this comparison even though it’s not a prompt-tracking tool, because it answers a question none of the dedicated trackers can: is AI visibility actually driving business outcomes?

You can have your brand appearing in 80% of tracked ChatGPT prompts and still have no idea whether that’s generating traffic, leads, or pipeline. GA4 closes that gap. It’s the only tool in this stack that connects AI presence to actual user behavior in terms of website sessions, engagement, conversions, and assisted conversions.

How we set it up

The core technique is a custom AI referral segment using a regex pattern that captures traffic from all major AI platforms:

(chat\.openai\.com|chatgpt\.com|perplexity\.ai|you\.com|gemini\.google\.com|copilot\.microsoft\.com|phind\.com|search\.brave\.com|arc\.net)

This gets applied as a segment filter on session source. We build this into a Looker Studio dashboard for clients – a calculated field bucketing Session source into “AI” vs. “Non-AI” – and update the regex monthly as new AI referrers emerge.
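For teams that want to check the same bucketing outside Looker Studio, for example against an exported list of session sources, the logic can be sketched in Python. The regex is the one shown above; the function name `bucket_source`, the case-insensitive flag, and the sample sources are assumptions for illustration.

```python
import re

# Pattern copied from the article. Case-insensitive matching is an assumption.
AI_REFERRER_PATTERN = re.compile(
    r"(chat\.openai\.com|chatgpt\.com|perplexity\.ai|you\.com|gemini\.google\.com"
    r"|copilot\.microsoft\.com|phind\.com|search\.brave\.com|arc\.net)",
    re.IGNORECASE,
)


def bucket_source(session_source):
    """Return "AI" if the session source matches a known AI referrer, else "Non-AI"."""
    return "AI" if AI_REFERRER_PATTERN.search(session_source or "") else "Non-AI"


# Hypothetical session sources, as they might appear in an export
sample_sources = ["chatgpt.com", "www.perplexity.ai", "google", "(direct)"]
for source in sample_sources:
    print(f"{source:20} -> {bucket_source(source)}")
```

One caveat with an unanchored pattern like this: it matches anywhere in the string, so a source such as thankyou.com would also be bucketed as "AI" because it contains you.com. Spot-check the matched rows when the regex is updated.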

We also run a second exploration filtered to “Session medium contains referral,” with Referrer added as a secondary dimension. This confirms you’re actually seeing rows like chatgpt.com / referral and perplexity.ai / referral, rather than misattributed direct traffic.

What we found in testing

Two things consistently surprised clients when we first showed them this data. First, Perplexity typically drove more measurable referral traffic than ChatGPT, because Perplexity is more citation-forward in its UX and actively encourages users to click through to sources. Second, AI-referred sessions had notably higher engagement rates than the site average on several accounts, suggesting that users arriving from AI citations often had high commercial intent.

The limitation is that GA4 only captures what AI engines directly refer. Brand searches triggered by AI exposure (where a user sees a recommendation in ChatGPT, then searches the brand name in Google) show up as organic branded traffic, not AI referrals. Tracking brand search lift over time, comparing before and after significant AI visibility changes, helps account for this. We detail that methodology in our AI visibility measurement guide for GA4.
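As a rough illustration of a before/after comparison, the sketch below computes the relative change in average branded-search volume between two periods. The function name `brand_search_lift` and the weekly figures are hypothetical, and this is a simplified stand-in rather than the full methodology from the guide.

```python
from statistics import mean


def brand_search_lift(before, after):
    """Relative change in average branded-search volume between two periods."""
    if not before or not after:
        raise ValueError("both periods need at least one data point")
    baseline = mean(before)
    if baseline == 0:
        raise ValueError("baseline average is zero; lift is undefined")
    return (mean(after) - baseline) / baseline


# Hypothetical weekly branded-search clicks around an AI visibility change
weeks_before = [400, 420, 380, 400]
weeks_after = [460, 480, 500, 480]

lift = brand_search_lift(weeks_before, weeks_after)
print(f"Brand search lift: {lift:.1%}")  # prints "Brand search lift: 20.0%"
```

A simple comparison like this does not control for seasonality or other campaigns running in the same window, so treat the result as directional.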

Where it falls short

GA4 tells you that AI is sending traffic to a given page. It doesn’t tell you which prompts triggered those visits, what the AI said about you, or why your competitor is getting cited more. It’s the business impact layer, not the diagnostic layer. It works alongside the prompt-tracking tools, not instead of them.

Our verdict

Start here before you spend money on anything else. If GA4 shows you zero or negligible AI referral traffic, that’s the first problem to solve.

3. Scrunch AI – Best for Prompt-Level Citation Visibility and Source Intelligence

Pricing: From $300/mo (Starter: 3 users, hundreds of custom + industry prompts). Growth, Pro, and Enterprise tiers add more prompts, personas, page audits, and dedicated support.

Engines tracked: ChatGPT, Gemini, Perplexity, Claude

Best for: B2B brands and mid-market teams ready for a structured monthly GEO operating rhythm

Scrunch is purpose-built for understanding how your brand appears inside AI-generated answers at the prompt level, and it goes further than most competitors by helping you understand why AI systems are making the choices they make.

What Scrunch does well

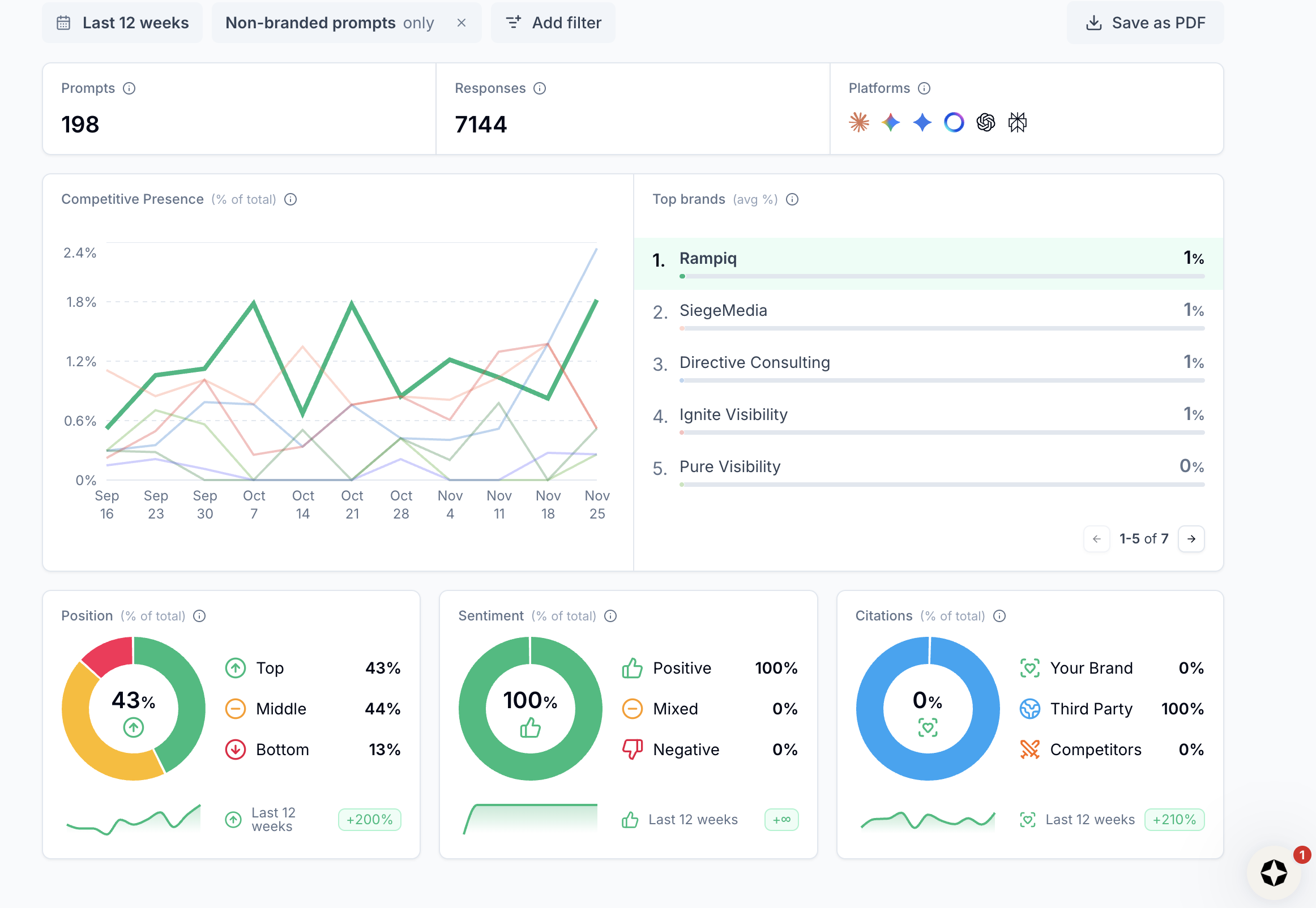

Once your domains and brands are connected, Scrunch’s Monitoring & Insights view gives you a prompt-level picture of where you’re visible, where you’re buried, and where you’re missing entirely. You can slice brand presence, performance, and position across AI engines by competitor, topic, persona, or geo – with trend lines over time. This is the view we use for monthly “are we winning the right prompts?” reviews: money prompts (high-intent buying queries), comparison prompts, and key use-case queries.

The Insights and Sources tabs are where Scrunch becomes operationally useful rather than just informational. Insights translates model behavior into plain-language recommendations: which topics to expand, where to strengthen definitions, which pages need clearer answer blocks. Sources highlights the third-party sites AI engines lean on most heavily for your topic set, which feeds directly into digital PR planning.

For teams that want to go beyond measurement, Scrunch’s AXP (Agent Experience Platform) module generates a parallel, AI-friendly version of your site: a structured layer that LLMs can parse more cleanly, without requiring a redesign of your public-facing pages. It’s a genuinely differentiated feature; none of the other tools on this list offer anything comparable.

Scrunch also monitors AI bot traffic, surfacing when and how AI crawlers hit your key pages and flagging crawl errors before they become visibility issues.

What we found in testing

The Sources data was consistently the most strategically valuable output. Knowing which publications, review sites, and industry resources ChatGPT and Perplexity were drawing from for a client’s category, and cross-referencing those against the client’s existing PR and link coverage, gave us a precise brief for authority-building work that was hard to replicate with other tools.

AI bot traffic monitoring was also useful as a sanity check when testing on-page changes. Being able to confirm that GPTBot or ClaudeBot had crawled a page after updating its structure helped us attribute subsequent visibility changes more confidently.
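The same sanity check can be approximated from raw server logs. The sketch below scans access-log lines in the common "combined" format for GPTBot or ClaudeBot hits on a given path. It is not how Scrunch works internally; the function name `ai_bot_hits`, the log lines, and the simplified user-agent strings are hypothetical.

```python
import re

# Crawler names mentioned in the text; matched as substrings of the user agent.
AI_BOT_TOKENS = ("GPTBot", "ClaudeBot")

# Assumes combined log format:
# ip - - [time] "METHOD path HTTP/x" status size "referer" "user-agent"
LOG_LINE = re.compile(
    r'\[(?P<time>[^\]]+)\] "(?P<method>\S+) (?P<path>\S+) [^"]*" '
    r'(?P<status>\d{3}) \S+ "[^"]*" "(?P<agent>[^"]*)"'
)


def ai_bot_hits(log_lines, page_path):
    """Return (bot, timestamp, status) for each AI-crawler request to page_path."""
    hits = []
    for line in log_lines:
        match = LOG_LINE.search(line)
        if not match or match.group("path") != page_path:
            continue
        for token in AI_BOT_TOKENS:
            if token in match.group("agent"):
                hits.append((token, match.group("time"), int(match.group("status"))))
    return hits


# Hypothetical log lines with simplified user-agent strings
sample_log = [
    '203.0.113.5 - - [12/Mar/2026:10:01:22 +0000] "GET /pricing HTTP/1.1" 200 5123 "-" "Mozilla/5.0 (compatible; GPTBot/1.0)"',
    '203.0.113.9 - - [12/Mar/2026:10:05:40 +0000] "GET /pricing HTTP/1.1" 200 5123 "-" "Mozilla/5.0 (Windows NT 10.0)"',
    '203.0.113.7 - - [12/Mar/2026:11:15:09 +0000] "GET /pricing HTTP/1.1" 404 312 "-" "Mozilla/5.0 (compatible; ClaudeBot/1.0)"',
    '203.0.113.5 - - [12/Mar/2026:12:30:00 +0000] "GET /blog HTTP/1.1" 200 8841 "-" "Mozilla/5.0 (compatible; GPTBot/1.0)"',
]

for bot, timestamp, status in ai_bot_hits(sample_log, "/pricing"):
    print(f"{bot} requested /pricing at {timestamp} (status {status})")
```

User-agent strings can be spoofed, so a match here shows that a request claimed to be from a given crawler, not that it definitely was. A non-200 status on a key page is the kind of crawl error worth investigating before attributing visibility changes.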

The workflow we developed: use Prompt Manager to load core prompts per product and ICP (solution, comparison, pain-point, and “best X for Y” prompts), let Scrunch collect data for 3-4 weeks, then use Insights to flag low-rank or missing prompts and map them to specific pages, and use Sources to build an outreach-ready list of outlets AI already trusts for that topic.

Where it falls short

The $300/mo starting price is a real threshold. For clients where AI visibility is still being validated as a business priority, it’s a hard sell until GA4 and initial audit data have made the case. We recommend Scrunch once a brand has confirmed AI is sending measurable traffic and has completed an initial visibility audit. Starting before that means paying for data you can’t yet act on at scale.

Our verdict

One of the most operationally complete tools in this category, particularly for source-level intelligence and the AI-crawlability infrastructure of the AXP module. The price point means it makes most sense once the channel has been validated.

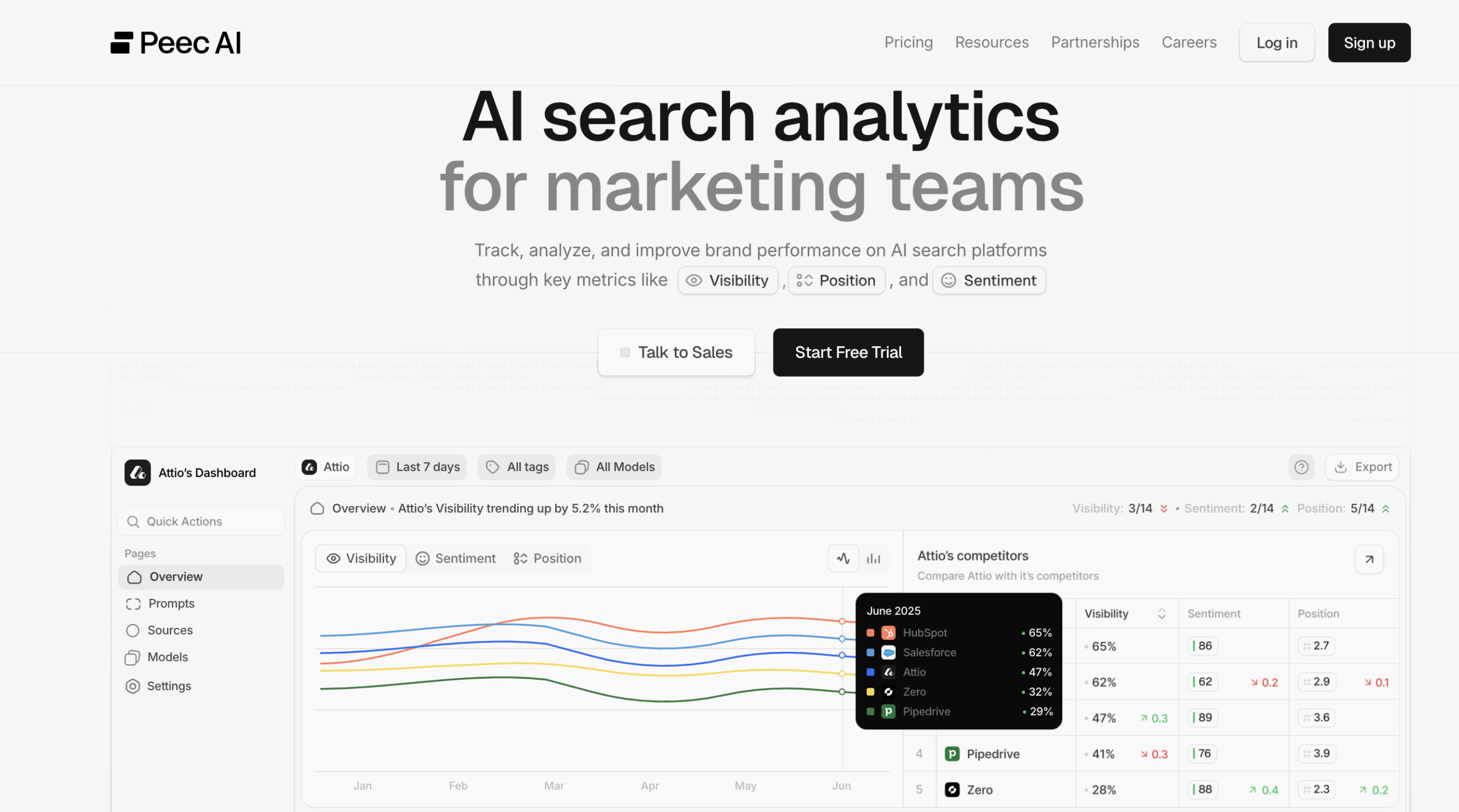

4. Peec AI – Strong for Trend and Sentiment Tracking Over Time

Pricing: From ~$104/mo, scaling with prompt volume

Engines tracked: ChatGPT, Gemini, Perplexity

Best for: Established brands with recurring AI mentions that need long-term trendlines and competitive sentiment context

Peec AI is most useful once you’ve moved past “are we visible at all?” and into tracking how your brand’s AI presence evolves over time. The branded vs. non-branded prompt segmentation cleanly separates “people asking about us by name” from “people asking about our category,” which is a useful distinction for client reporting.

The “Suggested” tab generates prompt ideas from your site’s existing keyword and topic coverage, which is a practical starting point if you’re uncertain which prompts to seed. The team has also published some of the more rigorous research on AI visibility dynamics in the market. Their analysis of the correlation between Google rankings and answer engine citation rates is worth reading if you’re trying to understand how traditional SEO authority connects to AI visibility.

Where Peec AI falls short

For brands at an early stage of AI visibility with few mentions and no established trendline, Peec AI has little to surface. Prompt costs also scale in a way that can make budgets unpredictable as you add clients, and the platform is less oriented toward agency multi-client workflow than Amadora.

Our verdict

Layer Peec AI in once repeat AI mentions are confirmed. Starting it before that inflection point means paying to monitor a signal that isn’t yet strong enough to act on.

5. Semrush AI Toolkit – Best for Teams Already Inside the Semrush Ecosystem

Pricing: $99/mo add-on (requires existing Semrush plan from $139.95/mo)

Engines tracked: ChatGPT, SearchGPT, Google AI Mode, Gemini, Perplexity

Best for: Mid-market brands and agencies already invested in Semrush

Semrush moved into AI visibility faster than most legacy SEO competitors, and the toolkit shows the benefits of building on a mature data infrastructure. The brand performance reports are clean, covering share of voice, sentiment analysis, and comparison against up to 50 competitors, all inside a dashboard your team already knows.

The sentiment layer is something standalone GEO trackers often skip. Knowing not just whether your brand appears, but whether the AI is describing it positively, neutrally, or negatively, gives you a different dimension of intelligence that matters at mid-market and enterprise scale.

Where it falls short

The AI visibility module doesn’t integrate seamlessly with Semrush’s core SEO data. You’ll find yourself jumping between reports rather than seeing a unified view. Methodology isn’t fully transparent. And the total cost adds up quickly: $139.95+ for the base plan, plus $99 for the AI toolkit. For agencies, multi-client workflow is workable but not purpose-built.

Our verdict

Right if you’re a heavy Semrush user who doesn’t want another platform. Not the right foundation if AI visibility tracking is a core service you’re delivering.

6. Ahrefs Brand Radar – Best for Connecting AI Visibility to Authority and Link Data

Pricing: ~$199/mo add-on (requires existing Ahrefs plan)

Engines tracked: ChatGPT, Google AI Overviews, Perplexity

Best for: Existing Ahrefs users who want AI visibility tied to content coverage and link authority signals

Ahrefs’ major advantage is connecting AI visibility to backlink and content authority data in a way no pure-play AI prompt tracker can. If your brand isn’t appearing in AI answers, Ahrefs can help identify whether that’s a content gap, an authority gap, or a citation source gap, because it has the underlying data to draw that connection.

Brand Radar is still maturing in several areas, with limited historical AI trend data compared to tools that have been tracking longer. The $199/mo add-on on top of an existing Ahrefs subscription is also a significant cost.

Our verdict

Genuinely valuable for diagnosing the why behind AI visibility gaps. But better as a diagnostic tool than a client-reporting platform.

7. SE Ranking AI Visibility Suite – Best Budget Entry Point

Pricing: Included in SE Ranking plans (from ~$119/mo)

Engines tracked: Google AI Overviews, Google AI Mode, ChatGPT, Perplexity, Gemini

Best for: Smaller agencies and in-house teams watching budget, already using SE Ranking

SE Ranking’s three-part suite includes an AI Overviews tracker, an AI Mode tracker, and a conversational AI visibility layer. This is the most comprehensive bundled offering in the traditional SEO platform space, and the fact that it’s included rather than an add-on makes it one of the most cost-effective entry points for AI visibility tracking.

The honest limitation is that it feels like a feature set added to an SEO platform rather than a purpose-built AI visibility tool. The depth of prompt-level analysis, citation sourcing, and multi-client management doesn’t match what dedicated platforms offer.

Our verdict

Strong value for budget-conscious teams starting their AI visibility journey. Plan to graduate to a dedicated platform once GEO becomes a significant part of your work.

8. Surfer AI Tracker – Best for Existing Surfer SEO Users

Pricing: From $95/mo for 25-prompt blocks

Engines tracked: ChatGPT, Google AI Overviews/AI Mode, Perplexity

Best for: Content teams already using Surfer SEO who want AI visibility in the same workflow

Surfer’s AI Tracker connects content optimization with visibility outcomes, and the prompt-level source transparency, showing which of your pages are being cited, aligns well with how a content team thinks about fixing gaps.

The tracker is still new, with shallow historical data, and pricing scales steeply with prompt volume. For teams not already in Surfer’s ecosystem, the case for adding this on top of a full Surfer subscription is harder to make.

Our verdict

Makes real sense if Surfer is already your content infrastructure.

9. Profound – Best for Enterprise Brands with Complex Requirements

Pricing: Not publicly disclosed; enterprise contracts

Engines tracked: Broad coverage across major platforms

Best for: Fortune 500 brands and large enterprises with dedicated digital intelligence teams

Profound is closer to a brand intelligence platform than an agency workflow tool. The depth of data, the prompt discovery methodology, and the enterprise-grade compliance posture (SOC 2, GDPR, HIPAA) make it the right fit for large brands with the internal resources to act on what it surfaces. For most agencies and mid-market brands, it’s overkill and over-budget.

Our verdict

The enterprise choice. Not relevant for most agency and SMB use cases.

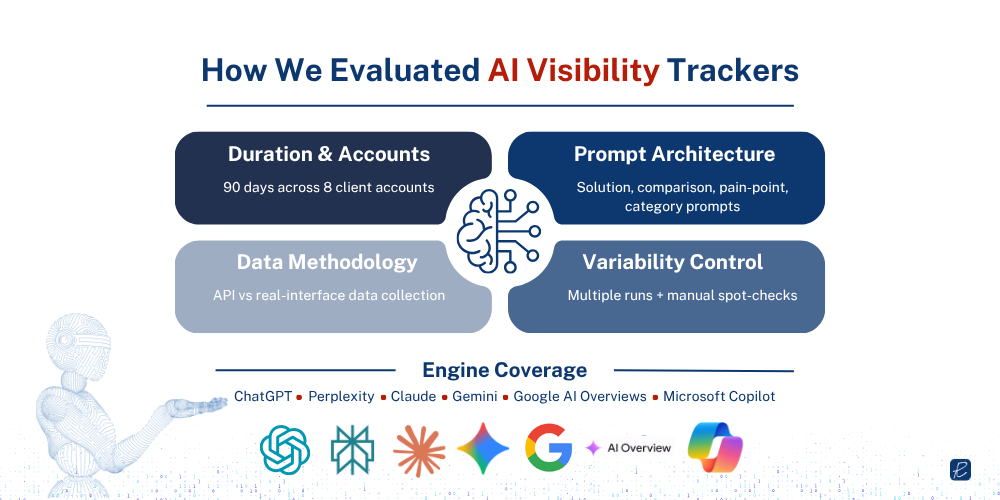

How We Tested These Tools

Before the reviews: here’s exactly how we evaluated each platform. These tools sit inside our active client workflow, and the assessment reflects months of operational use across real accounts.

Duration and Accounts

We evaluated each tool across a minimum of 90 days of active use, covering eight client accounts spanning B2B SaaS, professional services, and enterprise tech. Account sizes ranged from early-stage brands with minimal AI visibility to established SaaS brands already appearing in a meaningful share of relevant AI responses. This spread was deliberate because an AI visibility tracking tool that works well for a brand with 60% AI mention coverage may be useless for a brand starting from near-zero, and we wanted to capture both situations.

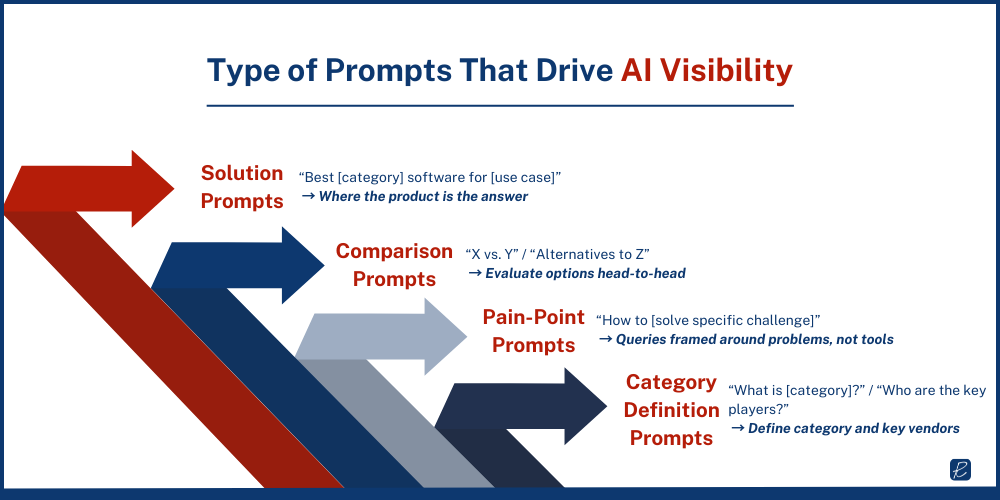

Prompt Architecture

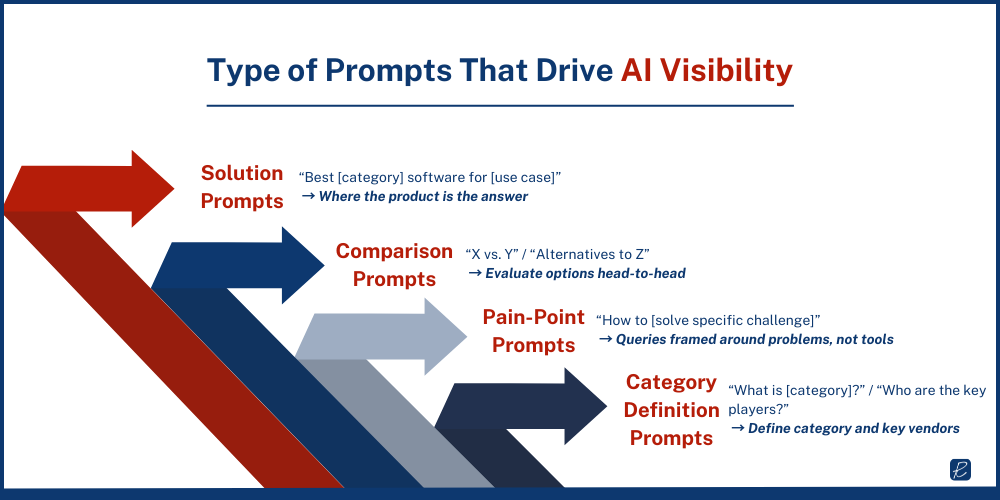

For each client, we built a structured prompt set across four categories:

- Solution prompts: queries where the client’s product is the answer (“best [category] software for [use case]”)

- Comparison prompts: queries where buyers evaluate options head-to-head (“X vs. Y,” “alternatives to Z”)

- Pain-point prompts: queries framed around problems rather than tools (“how to [solve specific challenge]”)

- Category definition prompts: broader queries establishing what a category is and who the key players are

Across the eight accounts, we tracked between 15 and 80 prompts per client depending on plan tier and category complexity. Total prompt volume across the evaluation period exceeded 4,000 tracked prompt-engine combinations.
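One way to keep a prompt set like this structured is to generate it from templates per category and then pair each prompt with each engine. The sketch below is illustrative only: the template wording, the client values, and the names `PROMPT_TEMPLATES` and `build_prompt_set` are assumptions, not the exact prompts we tracked.

```python
from itertools import product

# Hypothetical templates for the four prompt categories described above
PROMPT_TEMPLATES = {
    "solution": ["best {category} software for {use_case}"],
    "comparison": ["{brand} vs. {competitor}", "alternatives to {competitor}"],
    "pain_point": ["how to {challenge}"],
    "category_definition": ["what is {category} and who are the key players"],
}

# Engines named in the engine coverage list for this evaluation
ENGINES = [
    "ChatGPT", "Perplexity", "Claude", "Gemini",
    "Google AI Overviews", "Microsoft Copilot",
]


def build_prompt_set(values):
    """Fill every template with client-specific values, keeping the category label."""
    return [
        (category, template.format(**values))
        for category, templates in PROMPT_TEMPLATES.items()
        for template in templates
    ]


# Hypothetical client details
client = {
    "brand": "ExampleCRM",
    "competitor": "OtherCRM",
    "category": "CRM",
    "use_case": "small law firms",
    "challenge": "track client intake without spreadsheets",
}

prompts = build_prompt_set(client)
combinations = list(product(prompts, ENGINES))
print(f"{len(prompts)} prompts x {len(ENGINES)} engines = {len(combinations)} combinations")
```

Counting prompt-engine combinations rather than prompts alone is what makes totals grow quickly: even this five-prompt example produces 30 tracked combinations across six engines.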

Engine Coverage Tested

ChatGPT, Perplexity, Claude, Gemini, Google AI Overviews, and Microsoft Copilot. Not every tool covers all six. We have noted coverage differences in each review.

Data Collection Methodology Scrutiny

For each GEO tracker reviewed, we verified whether it hits the AI engine’s API or scrapes real user interfaces. This is not a minor technical detail. API calls typically use the base model without web search enabled, meaning you’re measuring the model’s training-data behavior rather than what a user with search-enabled ChatGPT actually sees.

Tools that didn’t disclose this methodology clearly were marked down. Tools that confirmed real-interface scraping were treated as methodologically stronger.

Variability Controls

AI responses are not deterministic. The same prompt on the same day can produce different citations. To account for this, we looked at consistency across multiple runs of the same prompt before drawing conclusions. Any tool that presented single-run data as definitive rankings was treated with skepticism.
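A simple way to express consistency across runs is a mention rate: the share of repeated runs of one prompt in which the brand appears. The sketch below assumes each run has already been reduced to a list of brands mentioned; the function name `mention_rate` and the run data are hypothetical.

```python
def mention_rate(runs, brand):
    """Share of runs of a single prompt in which the brand is mentioned."""
    if not runs:
        raise ValueError("need at least one run")
    hits = sum(1 for mentioned in runs if brand in mentioned)
    return hits / len(runs)


# Hypothetical results: brands mentioned in five runs of the same prompt
runs = [
    ["ExampleCRM", "OtherCRM"],
    ["OtherCRM"],
    ["ExampleCRM", "ThirdCRM"],
    ["ExampleCRM"],
    ["OtherCRM", "ThirdCRM"],
]

rate = mention_rate(runs, "ExampleCRM")
print(f"ExampleCRM appeared in {rate:.0%} of {len(runs)} runs")  # 60% of 5 runs
```

Read this way, a single run can only ever report 0% or 100%, which is why single-run data is a weak basis for a ranking.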

We also ran manual spot-checks, pulling the same prompts directly in each AI engine and comparing results to what the tool reported.
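The comparison behind a spot-check can be reduced to an agreement rate between what the tool reported and what a manual run showed for the same prompts. This is a minimal sketch under that assumption; the function name `match_rate` and the sample prompts are hypothetical, and real checks would also compare cited sources, not just presence.

```python
def match_rate(tool_reported, manual_checks):
    """Share of shared prompts where tool and manual check agree on brand presence."""
    shared = tool_reported.keys() & manual_checks.keys()
    if not shared:
        raise ValueError("no prompts in common to compare")
    agreements = sum(tool_reported[p] == manual_checks[p] for p in shared)
    return agreements / len(shared)


# Hypothetical data: True means the brand appeared in the answer for that prompt
tool_reported = {
    "best CRM software for small law firms": True,
    "alternatives to OtherCRM": True,
    "how to track client intake without spreadsheets": False,
    "what is CRM and who are the key players": True,
}
manual_checks = {
    "best CRM software for small law firms": True,
    "alternatives to OtherCRM": False,
    "how to track client intake without spreadsheets": False,
    "what is CRM and who are the key players": True,
}

agreement = match_rate(tool_reported, manual_checks)
print(f"Tool and manual checks agreed on {agreement:.0%} of prompts")  # 75%
```

Because AI answers vary between runs, some disagreement is expected even for an accurate tool, so the rate is best read alongside repeated manual runs rather than a single check.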

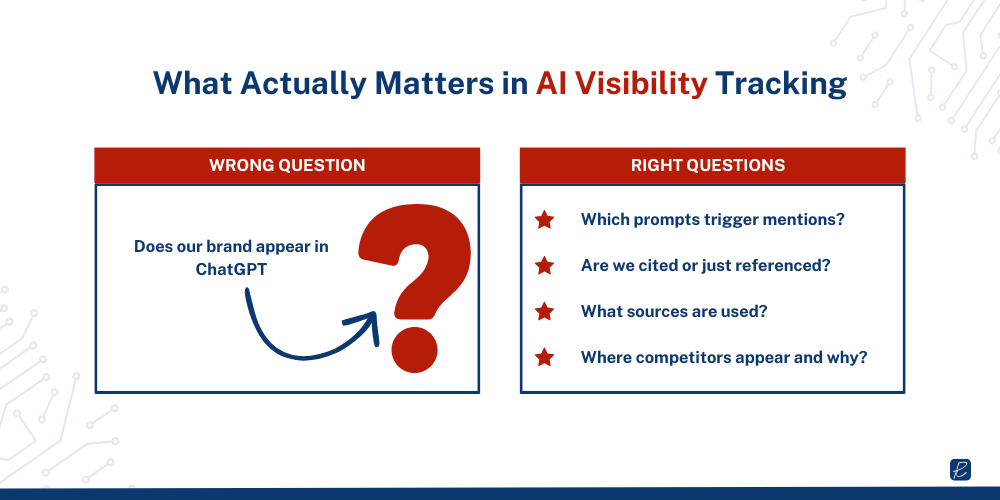

AI Visibility Tracking in 2026: What You Need to Know First

Before the tool reviews, one framing point: most SEO teams are still thinking about AI visibility the wrong way.

The question isn’t “does our brand appear in ChatGPT?” That’s a binary you can check manually in thirty seconds. The real questions are:

Which prompts trigger mentions?

Are we cited or just referenced?

What sources are the engines pulling from?

Where are our competitors showing up that we aren’t? And why?

The tools that answer those questions are genuinely useful. The ones that only return a presence score are dashboard decoration.

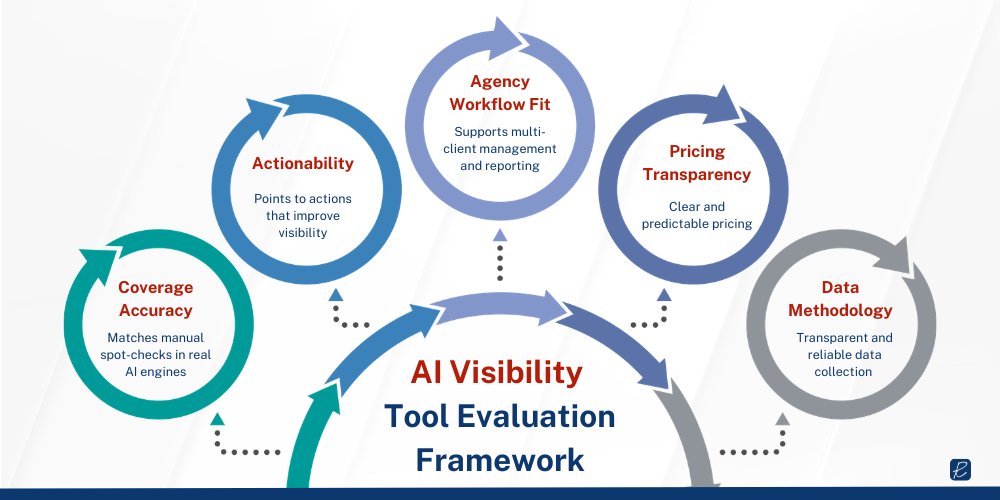

What We Scored Each Tool On

- Coverage accuracy: Does what the tool reports match manual spot-checks in the actual AI engine?

- Actionability: Does the data point to something you can actually do to improve visibility?

- Agency workflow fit: Multi-client management, reporting export quality, team collaboration

- Pricing transparency and predictability: Is the cost clear? Does it scale reasonably with prompt volume?

- Data methodology transparency: Does the tool explain how it collects data, and is that methodology sound?

With that context established, here’s how each platform performed.