Why standard SEO audits miss AI visibility problems entirely

Traditional SEO audits are built around a straightforward model: keywords, rankings, click-through rates, backlink profiles, and technical crawlability. They answer the question “how well does Google’s index treat our site?” And for organic search, that question still matters.

But AI search doesn’t work like organic search. And the metrics that tell you you’re doing well in one can completely obscure the fact that you’re invisible in the other.

Here’s where the disconnect happens.

Keyword tracking tells you whether you rank for a given term on a search engine results page. It doesn’t tell you whether ChatGPT, Perplexity, or Google’s AI Overview mentions your brand when a user asks a related question in natural language.

A company can rank #2 for “enterprise data integration platform” and still be completely absent when someone asks an AI assistant, “What are the best data integration tools for mid-size companies?”

Ranking position assumes a fixed, ordered list of results. AI answers don’t have one. They have mentions, citations, and recommendations instead. There’s no page 2 in an AI response. You’re either in the answer or you’re out of it.

Backlink profiles measure authority signals that Google’s algorithm weighs. LLMs pull from a different, partially overlapping set of sources. A site with a strong backlink profile but thin, poorly structured content may rank well organically while remaining invisible to AI models that prioritize well-organized, clearly stated information.

Click-through rate is irrelevant in zero-click AI responses. A user can receive a complete answer without visiting your site, yet the brands mentioned in that response still capture attention and influence decisions.

The practical effect is that a B2B company can perform well across traditional SEO metrics while remaining invisible in AI-driven discovery.

Standard audits don’t flag this because they aren’t designed to. This is why AI visibility requires its own measurement framework: a distinct set of AI visibility metrics that track presence, accuracy, and citation behavior across LLM-powered platforms.

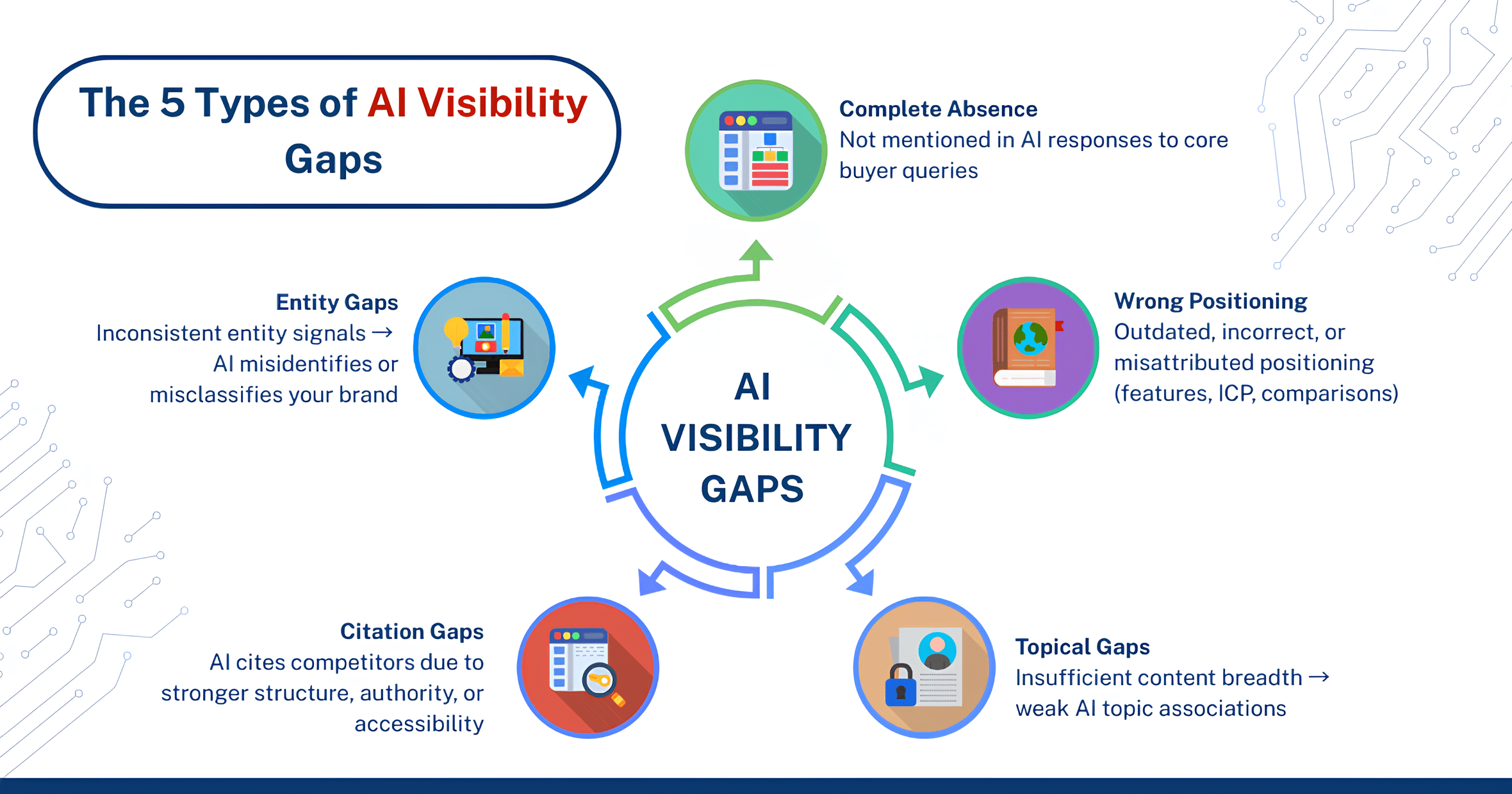

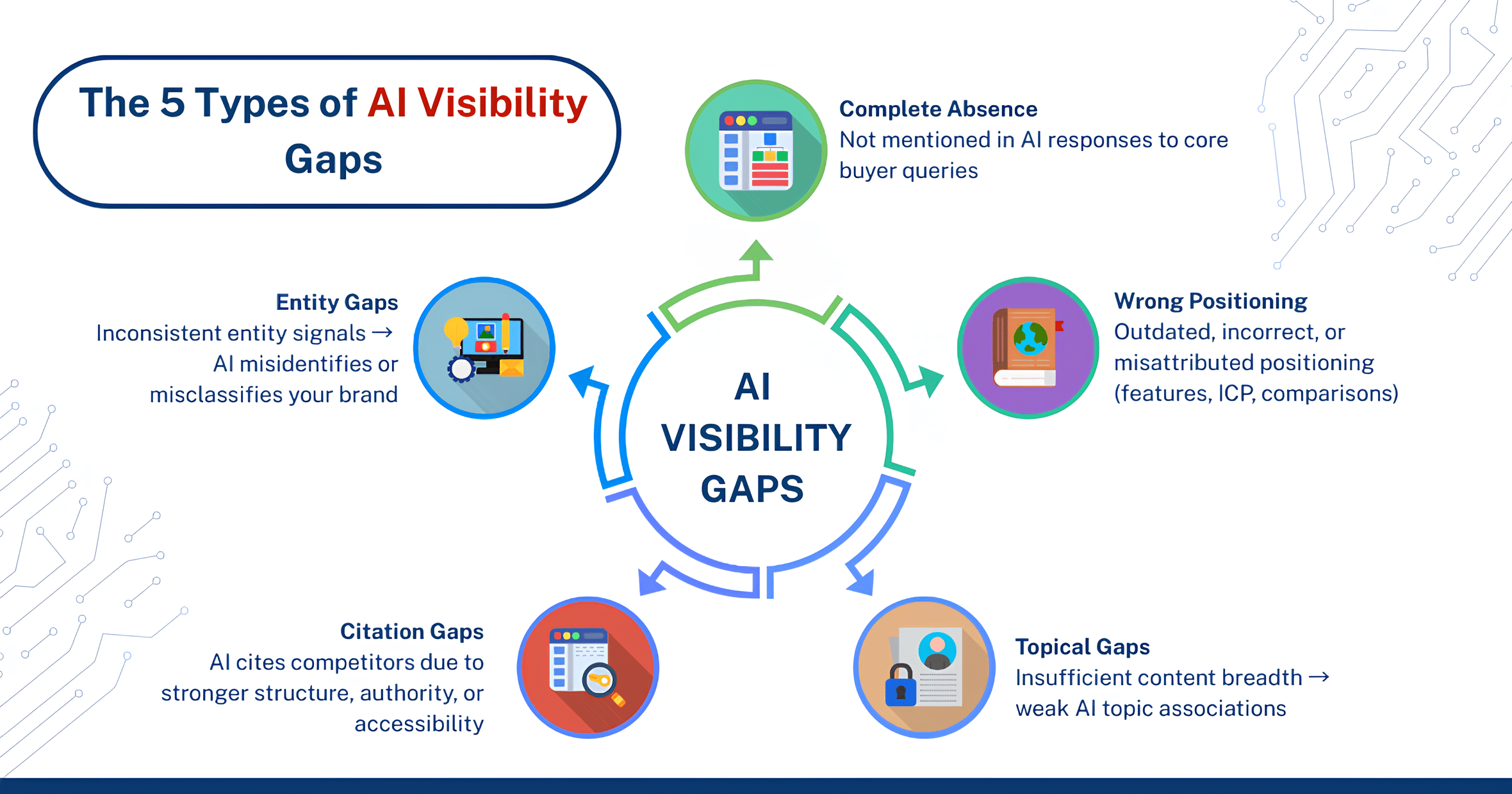

Types of AI visibility gaps and how to spot them

Not all AI visibility gaps look the same. In some, your brand does not appear at all. In others, you appear but with incorrect positioning, outdated information, or missing context that shifts buyers toward competitors.

Here is how we categorize the gaps most commonly identified in B2B AI visibility audits, along with the signals that reveal them.

1. Complete absence from category queries

This is the most straightforward gap. You ask an AI tool a category-level question that should include your brand, and it doesn’t.

Test this directly using the same questions your buyers ask early in their research:

“What are the best [your category] tools for [your ICP use case]?”

“Which [your category] platforms work well for [specific industry/company size]?”

“Compare the top [your category] solutions.”
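If you want to run these templates across several use cases and segments without retyping them, a small script can expand them. This is a minimal sketch: the category, use cases, and segments below are hypothetical placeholders, and `expand_prompts` is an illustrative helper, not part of any tool.

```python
from itertools import product

# Hypothetical placeholder values; replace with your own category, use cases, and segments.
CATEGORY = "data integration"
USE_CASES = ["real-time analytics", "CRM data sync"]
SEGMENTS = ["mid-size companies", "healthcare"]

# The three category-level templates above, with named placeholders.
TEMPLATES = [
    "What are the best {category} tools for {use_case}?",
    "Which {category} platforms work well for {segment}?",
    "Compare the top {category} solutions.",
]


def expand_prompts(category, use_cases, segments):
    """Fill every template with every combination, keeping order and dropping duplicates."""
    prompts = []
    for template, use_case, segment in product(TEMPLATES, use_cases, segments):
        # str.format ignores keyword arguments a template doesn't use.
        prompt = template.format(category=category, use_case=use_case, segment=segment)
        if prompt not in prompts:
            prompts.append(prompt)
    return prompts


if __name__ == "__main__":
    for p in expand_prompts(CATEGORY, USE_CASES, SEGMENTS):
        print(p)
```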

If your brand does not appear in responses, it indicates that either the AI does not have sufficient structured information about your brand in the relevant context, or competitors have stronger entity associations across the sources these systems rely on.

This is one of the most common gaps identified during AI visibility audits.
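Once you have responses, checking for a brand mention can be partly automated. The sketch below assumes you’ve saved each AI response as plain text and uses a case-insensitive whole-phrase match; `AcmeSync`, the vendor names, and the helper functions are hypothetical. String matching is a rough proxy: it misses misspellings and descriptions that never name the brand, so spot-check results by hand.

```python
import re


def mentions_brand(response_text, brand_aliases):
    """Return True if any alias appears as a standalone word or phrase (case-insensitive)."""
    for alias in brand_aliases:
        # Lookarounds instead of \b so aliases containing punctuation still match cleanly.
        pattern = r"(?<!\w)" + re.escape(alias) + r"(?!\w)"
        if re.search(pattern, response_text, flags=re.IGNORECASE):
            return True
    return False


def absence_rate(responses, brand_aliases):
    """Share of responses in which none of the brand aliases appear."""
    if not responses:
        return 0.0
    missing = sum(1 for text in responses if not mentions_brand(text, brand_aliases))
    return missing / len(responses)


if __name__ == "__main__":
    # Hypothetical responses and brand aliases.
    responses = [
        "Popular options include VendorA, AcmeSync, and VendorB.",
        "For mid-size companies, VendorA and VendorC are common choices.",
    ]
    print(absence_rate(responses, ["AcmeSync", "Acme Sync"]))
```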

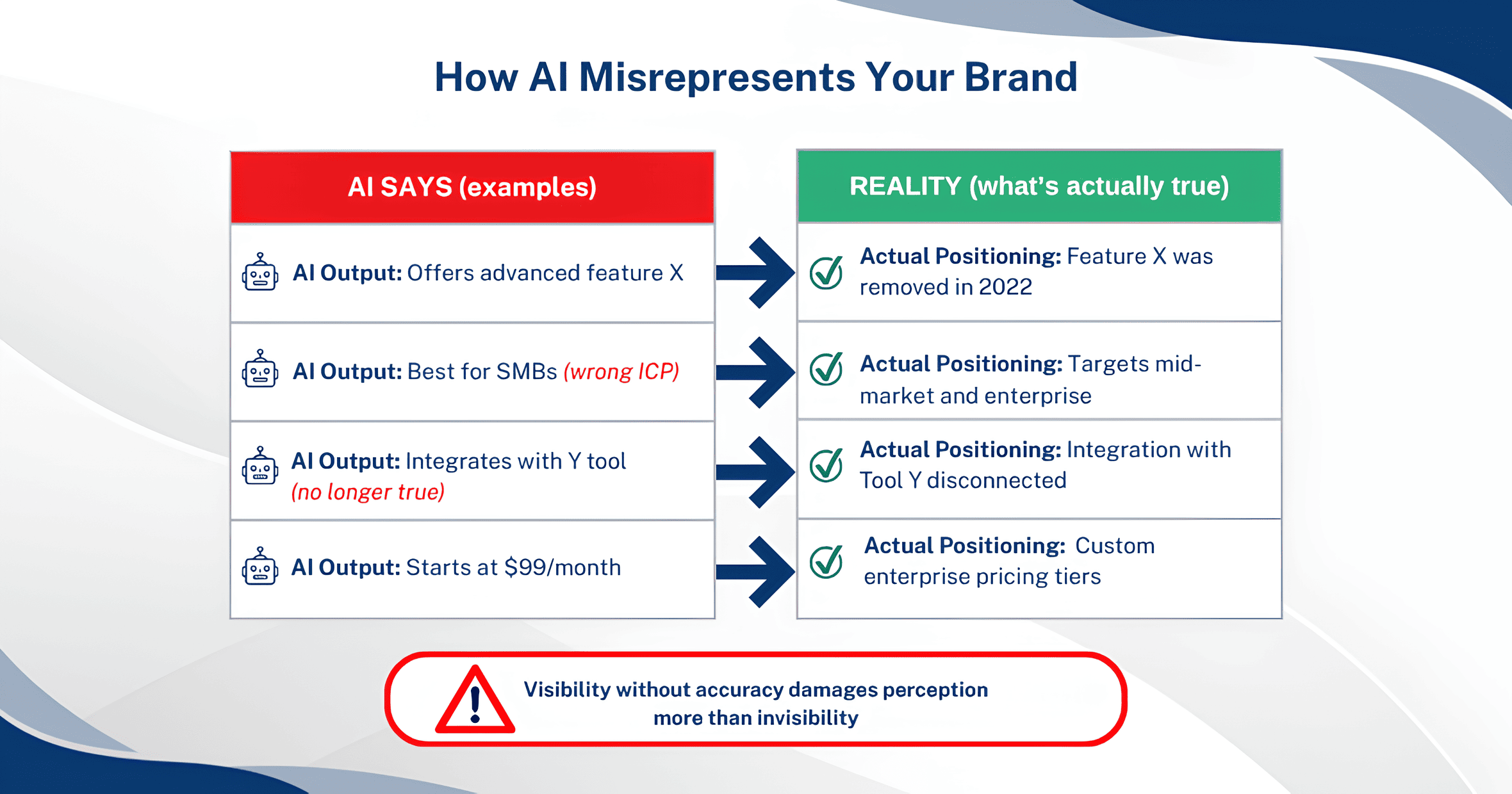

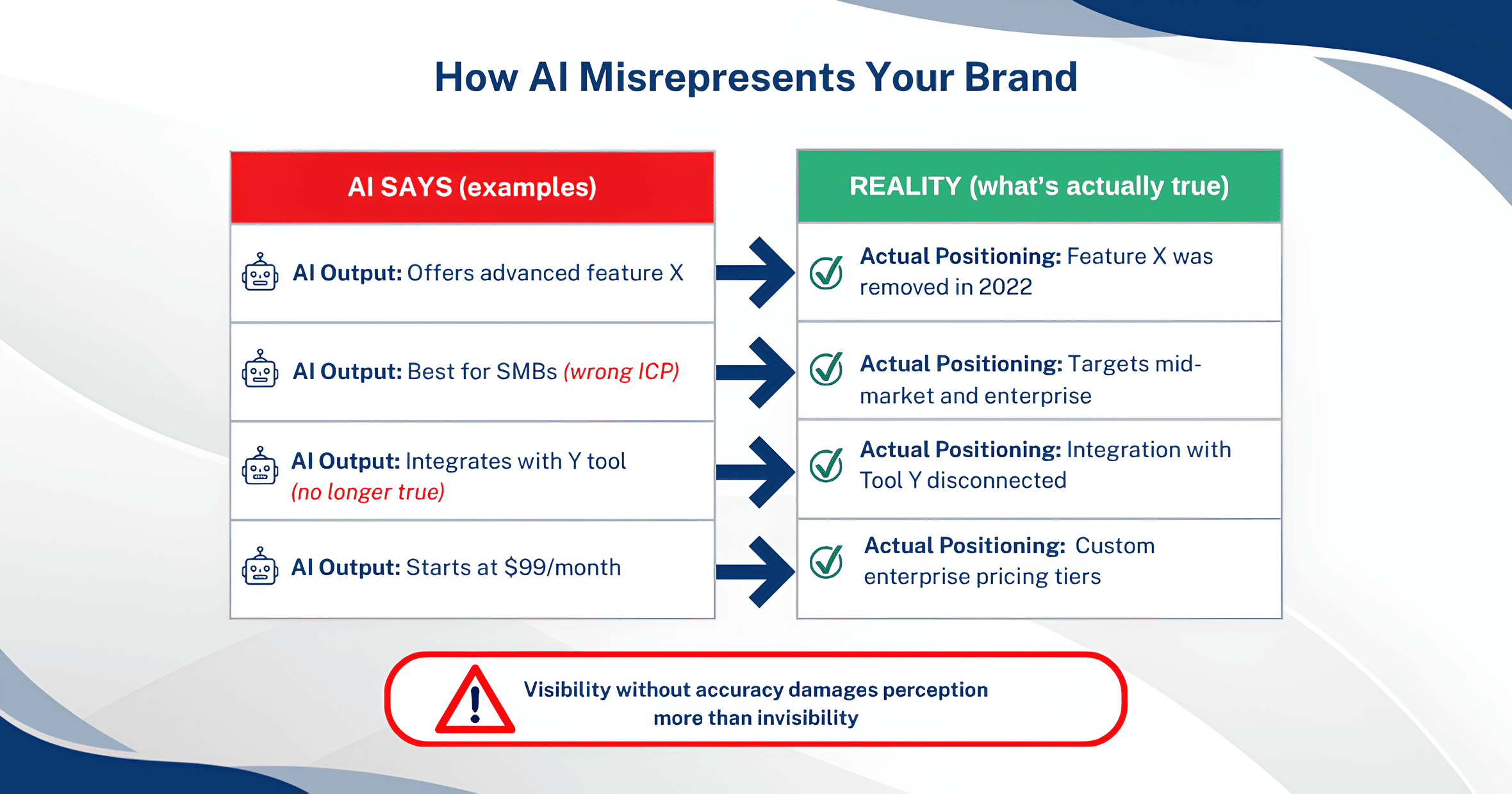

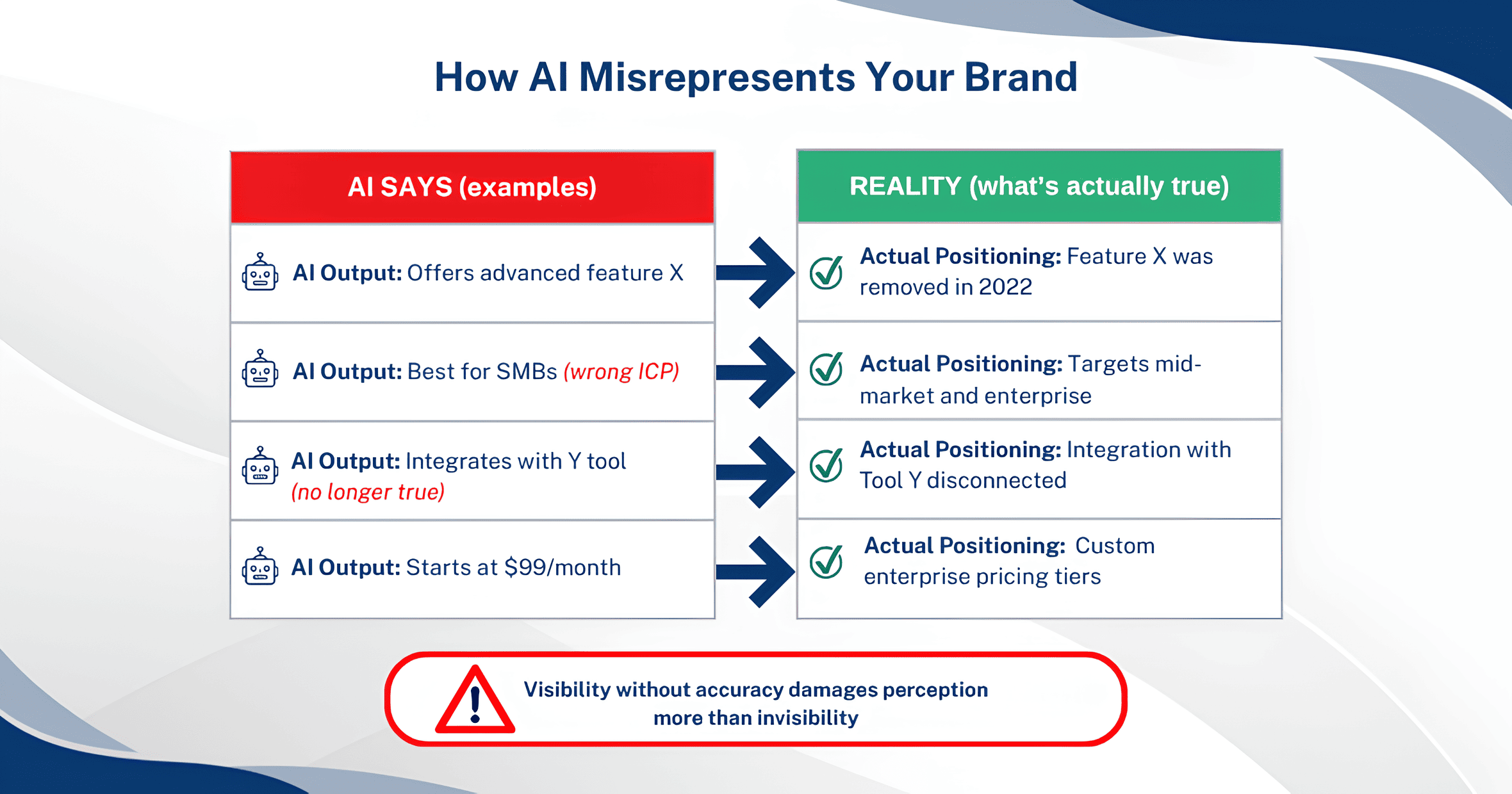

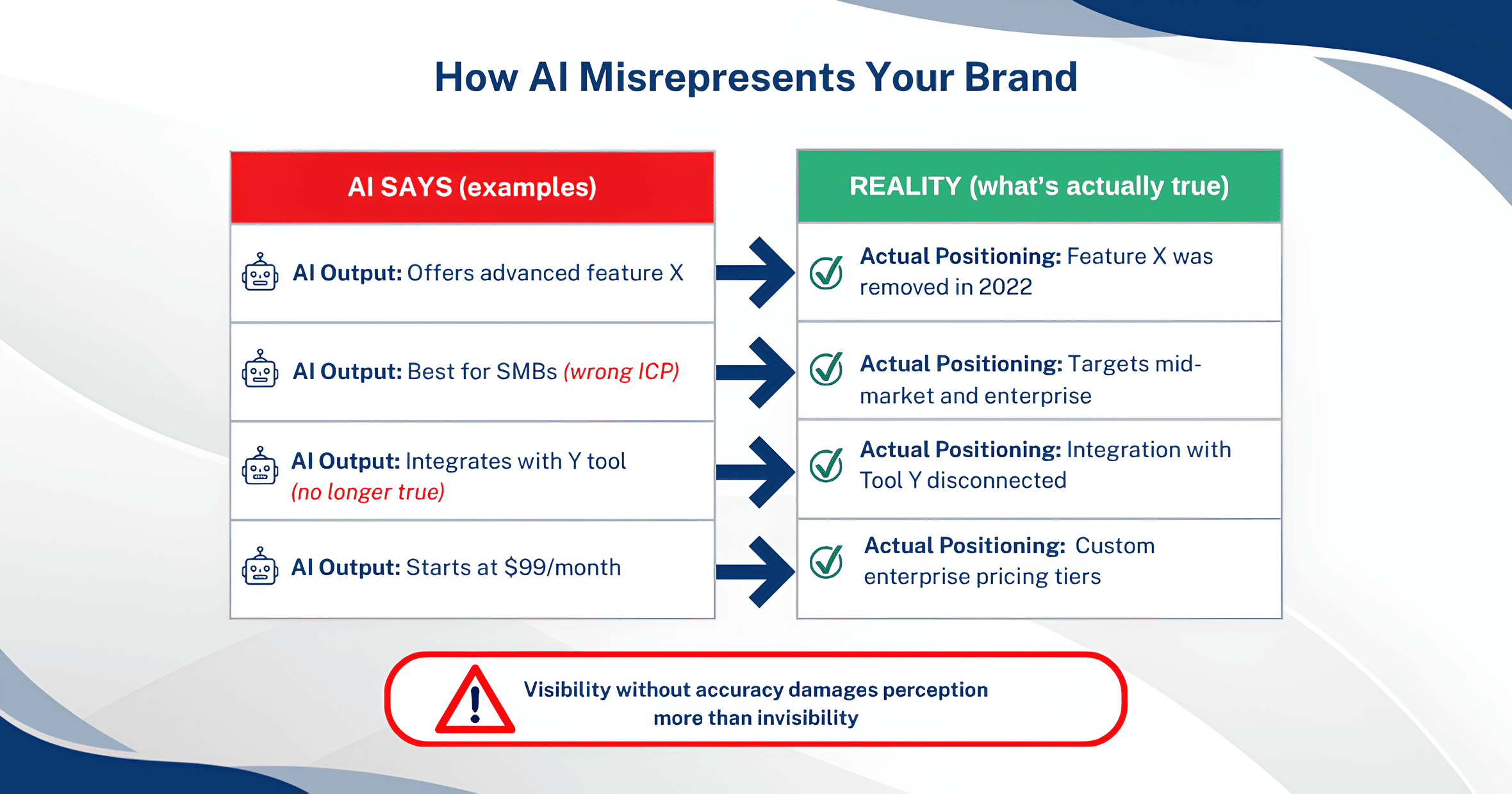

2. Presence with wrong, outdated, or misattributed positioning

Appearing in AI responses can be worse than not appearing at all if what the AI says about you is wrong.

We’ve seen LLMs describe a client’s product using feature sets the client deprecated years ago, and AI tools attribute a competitor’s capability to our client’s brand (and vice versa). We’ve also seen pricing described inaccurately, integration partnerships listed that no longer exist, and target markets defined too narrowly based on outdated content that still lives on third-party review sites.

To spot these gaps, you need to go beyond “are we mentioned?” and actually evaluate what’s being said. Run the same category and product-level prompts across multiple AI platforms and record the specific claims each one makes about your brand. Then cross-reference those claims against your current positioning.

Pay attention to:

- Feature descriptions that don’t match your current product

- Market positioning that reflects old messaging or a previous ICP

- Competitive comparisons where your differentiators are missing or understated

- Factual errors about pricing tiers, company size, founding date, integrations, or certifications

This type of gap is often overlooked internally but directly impacts how buyers interpret your offering.
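Cross-referencing is easier when each platform’s claims are recorded in the same structure as an internal fact sheet. A minimal sketch, assuming you transcribe claims by hand into a dict; the fact-sheet fields and values, and the `check_claims` helper, are hypothetical placeholders.

```python
# Hypothetical source-of-truth fact sheet; values are placeholders.
FACT_SHEET = {
    "founding_year": "2015",
    "pricing_tiers": {"Starter", "Growth", "Enterprise"},
    "integrations": {"Salesforce", "HubSpot", "Snowflake"},
}


def check_claims(platform, claims, fact_sheet):
    """Compare claims an AI platform made about the brand with the fact sheet.

    `claims` uses the same keys as `fact_sheet`. Returns a list of
    (platform, field, claimed, actual) tuples for each discrepancy.
    """
    issues = []
    for field, claimed in claims.items():
        if field not in fact_sheet:
            continue  # no source of truth recorded for this field
        actual = fact_sheet[field]
        if isinstance(actual, set):
            # Flag items the AI claims that aren't true today, e.g. a dropped integration.
            extra = set(claimed) - actual
            if extra:
                issues.append((platform, field, sorted(extra), sorted(actual)))
        elif claimed != actual:
            issues.append((platform, field, claimed, actual))
    return issues


if __name__ == "__main__":
    recorded = {"founding_year": "2012", "integrations": ["Salesforce", "Marketo"]}
    for issue in check_claims("Perplexity", recorded, FACT_SHEET):
        print(issue)
```

For set-valued fields, only claims missing from the fact sheet are flagged; understated differentiators and omissions still need a human read.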

3. Topical coverage gaps

LLMs associate brands with topics based on the breadth and depth of content connected to them. If your content covers only part of your expertise, AI systems reflect that limited understanding.

A B2B cybersecurity company, for example, might have deep content on endpoint detection but almost nothing published about its cloud security capabilities. When a buyer asks an AI about cloud security solutions, that company won’t surface, not because the AI is wrong, but because there’s insufficient topical signal to support the association.

To audit for topical coverage gaps, map your full range of products, services, use cases, and areas of expertise. Then systematically prompt AI tools with questions spanning each of those areas. Where you consistently don’t appear, you’ve found a topical gap – an area where your content footprint is too thin for AI models to confidently associate your brand with that topic.
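Once each prompt is tagged with the topic it probes, the “consistently don’t appear” test becomes a per-topic appearance rate. In this sketch the 25% threshold is an arbitrary assumption, `topical_gaps` is a hypothetical helper, and the topics echo the cybersecurity example above.

```python
from collections import defaultdict


def topical_gaps(results, threshold=0.25):
    """Flag topics where the brand appears in fewer than `threshold` of responses.

    `results` is a list of (topic, brand_mentioned) pairs, one per prompt run.
    Returns {topic: appearance_rate} for topics below the threshold, lowest first.
    """
    totals = defaultdict(int)
    hits = defaultdict(int)
    for topic, mentioned in results:
        totals[topic] += 1
        if mentioned:
            hits[topic] += 1
    rates = {topic: hits[topic] / totals[topic] for topic in totals}
    gaps = {topic: rate for topic, rate in rates.items() if rate < threshold}
    return dict(sorted(gaps.items(), key=lambda item: item[1]))


if __name__ == "__main__":
    runs = [
        ("endpoint detection", True), ("endpoint detection", True), ("endpoint detection", False),
        ("cloud security", False), ("cloud security", False), ("cloud security", False),
    ]
    print(topical_gaps(runs))
```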

This audit often reveals a mismatch between what a company actually does and what the content on (and about) their website communicates. That mismatch predates AI search, but AI search makes the consequences more visible and more immediate.

4. Citation source gaps

AI platforms frequently cite sources. Citation source gaps appear when AI tools reference your topic but cite competitors, industry publications, or third-party sites instead of your own content, even when your content covers the same ground.

The question to ask is: when an AI platform answers a question in your domain and cites sources, are any of those sources yours?

If the answer is consistently no, it usually means one of several things:

- Your content isn’t structured in a way that LLMs find easy to extract and cite. Long, meandering articles without clear definitions, direct answers, or well-organized sections get passed over in favor of content that states things plainly.

- Your content lives on a domain with lower perceived authority for that specific topic. A startup with strong product content may still lose citations to an established industry publication that covers the same topic with less depth but more domain authority.

- Your content isn’t accessible to AI crawlers. JavaScript-rendered pages, content locked behind authentication, or pages that block AI-specific user agents may be functionally invisible to some LLMs regardless of quality.
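One quick accessibility check is whether your robots.txt disallows AI crawlers. The sketch uses Python’s standard `urllib.robotparser` on a robots.txt string; the user-agent tokens listed are ones some AI vendors have published, but treat the list as an assumption and confirm current names in each vendor’s documentation. `SAMPLE_ROBOTS` and `blocked_ai_agents` are hypothetical. This covers robots.txt rules only, not JavaScript rendering or authentication walls.

```python
from urllib.robotparser import RobotFileParser

# Assumed list of AI crawler user-agent tokens; verify against vendor docs.
AI_USER_AGENTS = ["GPTBot", "ClaudeBot", "PerplexityBot", "Google-Extended"]

# Hypothetical robots.txt: blocks GPTBot entirely, blocks /app/ for everyone else.
SAMPLE_ROBOTS = """\
User-agent: GPTBot
Disallow: /

User-agent: *
Disallow: /app/
"""


def blocked_ai_agents(robots_txt, url, agents=AI_USER_AGENTS):
    """Return the AI user agents that this robots.txt disallows for `url`."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [agent for agent in agents if not parser.can_fetch(agent, url)]


if __name__ == "__main__":
    print(blocked_ai_agents(SAMPLE_ROBOTS, "https://example.com/blog/post"))
```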

Tracking citation patterns shows whether AI systems treat your content as a source of truth or rely on external validation instead.

This is a key signal used to prioritize content and authority improvements.
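To answer the “are any of those sources yours?” question with a number, you can compute the share of cited URLs that point at your domain. A minimal sketch, assuming you’ve already collected the cited URLs from each response; `example.com` stands in for your domain and `citation_share` is a hypothetical helper.

```python
from urllib.parse import urlparse


def citation_share(cited_urls, own_domain):
    """Fraction of cited URLs on `own_domain` or one of its subdomains."""
    if not cited_urls:
        return 0.0
    own = own_domain.lower()
    ours = 0
    for url in cited_urls:
        host = (urlparse(url).hostname or "").lower()
        # Exact match or a true subdomain (so "notexample.com" doesn't count).
        if host == own or host.endswith("." + own):
            ours += 1
    return ours / len(cited_urls)


if __name__ == "__main__":
    cited = [
        "https://www.example.com/guide",
        "https://industrynews.example.org/report",
        "https://docs.example.com/api",
        "https://competitor.example.net/blog",
    ]
    print(citation_share(cited, "example.com"))
```

Tracked per prompt or per topic over time, this ratio shows whether your own pages or third parties are carrying the citations.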

5. Structured data and entity gaps

LLMs and AI-powered search features rely heavily on entity recognition. This is the ability to understand that your brand name refers to a specific company, that your product names refer to distinct offerings, and that these entities connect to specific categories, use cases, and attributes.

Structured data (schema markup, knowledge graph presence, consistent entity information across the web) helps AI models resolve these associations correctly. When it’s missing or inconsistent, AI platforms may misidentify your brand, confuse it with similarly named entities, or fail to associate it with the right category at all.

Gaps here can look like:

- Your brand being confused with another company or product that shares part of your name.

- Your product categories being described incorrectly because your schema markup doesn’t specify what you are.

- Your company attributes (headquarters, founding year, key leadership) being pulled from outdated or incorrect sources because there’s no authoritative structured data to anchor them.
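On the schema markup side, an Organization object in JSON-LD is one common way to state these attributes explicitly. The sketch builds one with Python’s `json` module. Every value (company name, address, founder, profile URLs, the Wikidata ID) is a hypothetical placeholder, and `to_script_tag` is an illustrative helper; the property names come from the schema.org Organization type.

```python
import json

# Placeholder values for a hypothetical company; every field here is an example.
organization = {
    "@context": "https://schema.org",
    "@type": "Organization",
    "name": "Example Corp",
    "url": "https://www.example.com",
    "logo": "https://www.example.com/logo.png",
    "foundingDate": "2015",
    "address": {
        "@type": "PostalAddress",
        "addressLocality": "Austin",
        "addressRegion": "TX",
        "addressCountry": "US",
    },
    "founder": {"@type": "Person", "name": "Jane Doe"},
    # Profiles that point at the same entity; the Wikidata ID is a placeholder.
    "sameAs": [
        "https://www.wikidata.org/wiki/Q0000000",
        "https://www.linkedin.com/company/example-corp",
        "https://www.crunchbase.com/organization/example-corp",
    ],
}


def to_script_tag(data):
    """Wrap a JSON-LD object in the <script> tag that is embedded in the page."""
    return '<script type="application/ld+json">\n' + json.dumps(data, indent=2) + "\n</script>"


if __name__ == "__main__":
    print(to_script_tag(organization))
```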

To audit this, check your Google Knowledge Panel (if one exists), review your schema markup implementation for Organization, Product, and Service types, and verify that your entity information is consistent across your website, Wikidata, Crunchbase, LinkedIn, and other platforms that LLMs commonly reference for entity data.
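The consistency check across your website, Wikidata, Crunchbase, LinkedIn, and similar profiles can be done by transcribing each source’s entity attributes into a dict and diffing them. This sketch assumes the values are collected by hand; it doesn’t call any of those platforms’ APIs, and the field names and `inconsistent_fields` helper are illustrative.

```python
def inconsistent_fields(profiles):
    """Return {field: {source: value}} for fields whose values differ across sources.

    `profiles` maps a source name (e.g. "website", "Crunchbase") to a dict of
    entity attributes recorded on that source. Values are compared after
    trimming whitespace and lowercasing; sources missing a field are skipped.
    """
    fields = set()
    for attrs in profiles.values():
        fields.update(attrs)
    report = {}
    for field in sorted(fields):
        values = {src: attrs[field] for src, attrs in profiles.items() if field in attrs}
        normalized = {str(v).strip().lower() for v in values.values()}
        if len(normalized) > 1:
            report[field] = values
    return report


if __name__ == "__main__":
    # Hypothetical values transcribed from each source.
    profiles = {
        "website": {"founding_year": "2015", "headquarters": "Austin, TX"},
        "Crunchbase": {"founding_year": "2014", "headquarters": "Austin, TX"},
        "LinkedIn": {"founding_year": "2015", "headquarters": "austin, tx "},
    }
    print(inconsistent_fields(profiles))
```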

Auditing AI search visibility

The gap types above are individual components. Here’s how to bring them together into a structured audit.

Step 1: Build your prompt library

Start with the questions your actual buyers ask. Pull from sales call transcripts, customer interviews, and support tickets. Organize them by funnel stage – category exploration, solution comparison, feature-specific questions, and implementation/integration queries. You may need around 20-30 prompts to get a meaningful picture, and ideally more for complex products or multi-segment ICPs.
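Keeping the prompt library as structured data makes it easy to see whether any funnel stage is under-represented. A minimal sketch: the `Prompt` class and `coverage_report` helper are hypothetical, the stage names follow the four stages above, and the default minimum of 20 uses the low end of the suggested range.

```python
from collections import Counter
from dataclasses import dataclass

# Funnel stages used to organize the library.
STAGES = (
    "category exploration",
    "solution comparison",
    "feature-specific",
    "implementation/integration",
)


@dataclass(frozen=True)
class Prompt:
    text: str
    stage: str
    source: str  # e.g. "sales call", "customer interview", "support ticket"

    def __post_init__(self):
        if self.stage not in STAGES:
            raise ValueError(f"unknown funnel stage: {self.stage!r}")


def coverage_report(prompts, minimum=20):
    """Count prompts per stage, list empty stages, and check the total against `minimum`."""
    counts = Counter(p.stage for p in prompts)
    missing = [stage for stage in STAGES if counts[stage] == 0]
    return {"counts": dict(counts), "missing_stages": missing, "enough": len(prompts) >= minimum}
```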

Step 2: Run prompts across platforms systematically

Test each prompt on ChatGPT, Claude, Grok, Perplexity, Google AI Overviews, and Gemini. Record the full response, noting whether your brand appears, what’s said about you, which competitors are mentioned, and which sources are cited.

If you run prompts manually, use clean sessions (no logged-in accounts, no conversation history) to avoid personalization bias.
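Whether you record responses by hand or export them from a tool, a fixed record shape keeps runs comparable across platforms. This sketch writes one row per prompt-platform run to CSV using only the standard library; the `Observation` fields mirror the items listed above and are an assumed layout, not a format any particular tool uses.

```python
import csv
import io
from dataclasses import asdict, dataclass, field, fields


@dataclass
class Observation:
    """One prompt run on one platform."""
    prompt: str
    platform: str
    brand_mentioned: bool
    claims: str = ""  # what the response says about the brand
    competitors: list = field(default_factory=list)
    cited_sources: list = field(default_factory=list)


def to_csv(observations):
    """Serialize observations to CSV text; list fields are joined with '|'."""
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=[f.name for f in fields(Observation)])
    writer.writeheader()
    for obs in observations:
        row = asdict(obs)
        row["competitors"] = "|".join(obs.competitors)
        row["cited_sources"] = "|".join(obs.cited_sources)
        writer.writerow(row)
    return buffer.getvalue()
```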

Running this manually is time-consuming. In practice, we use tools like Amadora to run prompts at scale, track responses, and identify visibility gaps systematically.

Step 3: Categorize what you find

Map each response to the gap types above. You’ll likely find a mix of the various gaps we’ve discussed. The pattern you identify is more important than any individual result. If you’re consistently absent from early-funnel category queries but present in specific feature comparisons, that tells you something different from being absent everywhere.
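One way to surface the pattern is a stage-by-platform matrix of how often the brand appeared. The sketch assumes each run has been tagged with its funnel stage, platform, and whether the brand was mentioned; `presence_matrix` and `absent_stages` are hypothetical helpers.

```python
from collections import defaultdict


def presence_matrix(runs):
    """Return {stage: {platform: share of runs in which the brand appeared}}.

    `runs` is an iterable of (stage, platform, brand_mentioned) tuples.
    """
    tallies = defaultdict(lambda: [0, 0])  # (stage, platform) -> [present, total]
    for stage, platform, mentioned in runs:
        tally = tallies[(stage, platform)]
        tally[0] += int(mentioned)
        tally[1] += 1
    matrix = defaultdict(dict)
    for (stage, platform), (present, total) in tallies.items():
        matrix[stage][platform] = present / total
    return dict(matrix)


def absent_stages(matrix):
    """Funnel stages where the brand never appeared on any platform tested."""
    return sorted(stage for stage, row in matrix.items() if all(rate == 0 for rate in row.values()))
```

A row of zeros for category exploration alongside non-zero feature-specific rates is the “absent early, present late” pattern described above.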

Step 4: Identify the source-level causes

For each gap, trace backward to the likely cause. Is the content missing entirely? Is it present but poorly structured? Is it on a page that AI crawlers can’t access? Is it contradicted by third-party content that the AI treats as more authoritative? This is where the audit transitions from diagnosis to actionable roadmap.

Step 5: Benchmark against competitors

An AI visibility audit in isolation only tells you where you stand. Benchmarking against two or three key competitors shows you where you’re losing ground and where competitors have vulnerabilities you can exploit. If a competitor is being cited for a topic where your expertise is deeper, that’s a gap you can close with the right content and optimization strategy.
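A simple benchmark is share of voice: the fraction of responses that mention each brand. This sketch assumes you’ve recorded, for each response, the set of brands it mentioned; `Us`, `RivalA`, `RivalB`, and the `share_of_voice` helper are placeholders. Running it per topic or per funnel stage shows where a competitor is out-appearing you.

```python
from collections import Counter


def share_of_voice(mentions_per_response, brands):
    """Fraction of responses that mention each brand in `brands`.

    `mentions_per_response` is a list of sets, one per AI response, holding
    the brands that response mentioned.
    """
    total = len(mentions_per_response)
    counts = Counter(b for mentioned in mentions_per_response for b in mentioned if b in brands)
    return {b: (counts[b] / total if total else 0.0) for b in brands}


if __name__ == "__main__":
    responses = [{"Us", "RivalA"}, {"RivalA"}, {"RivalA", "RivalB"}, {"Us"}]
    print(share_of_voice(responses, ["Us", "RivalA", "RivalB"]))
```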

The output of this process should be a prioritized list of gaps, ranked by business impact and effort to close.
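To turn that list into an order of work, one option is to score each gap for impact and effort and sort by the ratio. The 1-to-5 scales, the `Gap` fields, and the ratio itself are assumptions for illustration; a weighted score or a simple impact/effort quadrant would work just as well.

```python
from dataclasses import dataclass


@dataclass
class Gap:
    description: str
    gap_type: str  # e.g. "absence", "misattribution", "topical", "citation", "entity"
    impact: int    # 1 (low) to 5 (high) business impact, scored by the team
    effort: int    # 1 (low) to 5 (high) effort to close, scored by the team


def prioritize(gaps):
    """Sort gaps by impact-to-effort ratio, highest first; ties go to higher impact."""
    return sorted(gaps, key=lambda g: (g.impact / g.effort, g.impact), reverse=True)


if __name__ == "__main__":
    backlog = [
        Gap("No mention in category queries", "absence", 5, 4),
        Gap("Wrong founding year on Crunchbase", "entity", 2, 1),
        Gap("Competitor cited for cloud security", "citation", 4, 2),
    ]
    for gap in prioritize(backlog):
        print(gap.gap_type, gap.description)
```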